pax_global_header comment=3edd9749699394e2961d3b9bd33d260aea5583c8

BerryNet-upstream-3.9.0/.eslintrc.yaml

env:

  node: true

  es6: true

extends: google

rules:

  comma-dangle: [2, only-multiline]

BerryNet-upstream-3.9.0/.github/FUNDING.yml

# These are supported funding model platforms

github: # Replace with up to 4 GitHub Sponsors-enabled usernames e.g., [user1, user2]

patreon: # Replace with a single Patreon username

open_collective: berrynet # Replace with a single Open Collective username

ko_fi: # Replace with a single Ko-fi username

tidelift: # Replace with a single Tidelift platform-name/package-name e.g., npm/babel

community_bridge: # Replace with a single Community Bridge project-name e.g., cloud-foundry

liberapay: # Replace with a single Liberapay username

issuehunt: # Replace with a single IssueHunt username

otechie: # Replace with a single Otechie username

custom: ['https://paypal.me/berrynet'] # Replace with up to 4 custom sponsorship URLs e.g., ['link1', 'link2']

BerryNet-upstream-3.9.0/.gitignore

# Byte-compiled / optimized / DLL files

__pycache__

*.pyc

# Distribution / packaging

build

dist

*.egg-info

# Environments

env

BerryNet-upstream-3.9.0/.gitlab-ci.yml

# Official language image. Look for the different tagged releases at:

# https://hub.docker.com/r/library/python/tags/

image: gitlab-ci:base

# Change pip's cache directory to be inside the project directory since we can

# only cache local items.

variables:

  PIP_CACHE_DIR: "$CI_PROJECT_DIR/.cache"

# Pip's cache doesn't store the python packages

# https://pip.pypa.io/en/stable/reference/pip_install/#caching

#

# If you want to also cache the installed packages, you have to install

# them in a virtualenv and cache it as well.

cache:

  paths:

    - .cache/pip
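
# A minimal sketch of the virtualenv approach mentioned in the comments above.
# The directory name venv/ and the use of "python3 -m venv" are assumptions
# for illustration only; this project's pipeline does not do this:
#
# cache:
#   paths:
#     - .cache/pip
#     - venv/
#
# before_script:
#   - python3 -m venv venv
#   - . venv/bin/activate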

stages:

  - build

  - test

  - deploy

before_script:

  - python3 -V # Print out python version for debugging

  - eval $(ssh-agent -s)

  - echo "$SSH_PRIVATE_KEY" | tr -d '\r' | ssh-add - > /dev/null

  - mkdir -p ~/.ssh && chmod 700 ~/.ssh

  - echo "$SSH_KNOWN_HOSTS" > ~/.ssh/known_hosts && chmod 644 ~/.ssh/known_hosts

build-wheel:

  stage: build

  script:

    - pip3 wheel --wheel-dir=./dist .

  artifacts:

    paths:

      - dist/

unit-test:

  stage: test

  dependencies:

    - build-wheel

  script:

    - pip3 install dist/*.whl tensorflow

    - apt update && apt install -y libsm6 libxrender-dev

    - python3 setup.py test

  allow_failure: true

pep8-codestyle:

  stage: test

  script:

    - pycodestyle .

  allow_failure: true

pylint-codestyle:

  stage: test

  script:

    - pylint berrynet

  allow_failure: true

deploy-pypi:

  stage: deploy

  dependencies:

    - build-wheel

  script:

    - git clone git@gitlab.com:DT42/infrastructure42/dt42pypi.git

    - cp dist/berrynet*.whl dt42pypi/ && cd dt42pypi

    - dir2pi -n .

    - git add . && git commit -m "Add $CI_PROJECT_NAME $CI_COMMIT_TAG wheel. This is an auto-commit by GitLab-CI Runner."

    - git pull && git push

  only:

    - tags

BerryNet-upstream-3.9.0/.gitmodules

[submodule "inference/darkflow"]

path = inference/darkflow

url = https://github.com/thtrieu/darkflow.git

BerryNet-upstream-3.9.0/AUTHORS

# Authors and contributors ordered by first contribution.

Bofu Chen - bofu AT dt42 dot io

Joseph Liu - joseph AT dt42 dot io

Kai-Heng Feng - khfeng AT dt42 dot io

Tammy Yang - tammy AT dt42 dot io

Paul Liu - paulliu AT debian dot org

Katsuya Hyodo (PINTO0309) - rmsdh122 AT yahoo dot co dot jp

Sherry Chung - sherry AT dt42 dot io

Mei Mei - meimei AT dt42 dot io

BerryNet-upstream-3.9.0/BACKERS.md

Sponsors & Backers

BerryNet is a GPL-licensed FLOSS project. It is an independent project whose ongoing development is made possible entirely by the support of these awesome [backers](https://github.com/DT42/BerryNet/blob/master/BACKERS.md). If you'd like to join them, please consider:

* [Become a backer or sponsor on Open Collective](https://opencollective.com/berrynet).

* [One-time donation via PayPal or crypto-currencies.](https://github.com/DT42/BerryNet/wiki/Donation#one-time-donations)

One-Time Donations

* Penk Chen

BerryNet-upstream-3.9.0/CONTRIBUTING.md

We use [Developer Certificate of Origin](https://developercertificate.org/).

To use the DCO, you only need to add your sign-off to a Git commit:

```

$ git commit -s -m "your commit message."

```
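
The `-s` flag appends a `Signed-off-by` trailer built from your configured Git identity. Here is a minimal sketch to see the result, assuming a throwaway repository and a placeholder identity (`Example Dev`, `dev@example.com`), both hypothetical and not part of this project:

```shell
# Create a scratch repository so the demo does not touch a real project.
repo=$(mktemp -d)
cd "$repo"
git init -q

# Placeholder identity (an assumption for this demo); -s builds the trailer from it.
git config user.name "Example Dev"
git config user.email "dev@example.com"

# --allow-empty lets us commit without staging any files.
git commit -q -s --allow-empty -m "your commit message."

# Print the full commit message, including the sign-off trailer.
git log -1 --format=%B
```

The last command should print the commit message followed by a trailer line of the form `Signed-off-by: Example Dev <dev@example.com>`.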

BerryNet-upstream-3.9.0/LICENSE.txt

GNU GENERAL PUBLIC LICENSE

Version 3, 29 June 2007

Copyright (C) 2007 Free Software Foundation, Inc.

Everyone is permitted to copy and distribute verbatim copies

of this license document, but changing it is not allowed.

Preamble

The GNU General Public License is a free, copyleft license for

software and other kinds of works.

The licenses for most software and other practical works are designed

to take away your freedom to share and change the works. By contrast,

the GNU General Public License is intended to guarantee your freedom to

share and change all versions of a program--to make sure it remains free

software for all its users. We, the Free Software Foundation, use the

GNU General Public License for most of our software; it applies also to

any other work released this way by its authors. You can apply it to

your programs, too.

When we speak of free software, we are referring to freedom, not

price. Our General Public Licenses are designed to make sure that you

have the freedom to distribute copies of free software (and charge for

them if you wish), that you receive source code or can get it if you

want it, that you can change the software or use pieces of it in new

free programs, and that you know you can do these things.

To protect your rights, we need to prevent others from denying you

these rights or asking you to surrender the rights. Therefore, you have

certain responsibilities if you distribute copies of the software, or if

you modify it: responsibilities to respect the freedom of others.

For example, if you distribute copies of such a program, whether

gratis or for a fee, you must pass on to the recipients the same

freedoms that you received. You must make sure that they, too, receive

or can get the source code. And you must show them these terms so they

know their rights.

Developers that use the GNU GPL protect your rights with two steps:

(1) assert copyright on the software, and (2) offer you this License

giving you legal permission to copy, distribute and/or modify it.

For the developers' and authors' protection, the GPL clearly explains

that there is no warranty for this free software. For both users' and

authors' sake, the GPL requires that modified versions be marked as

changed, so that their problems will not be attributed erroneously to

authors of previous versions.

Some devices are designed to deny users access to install or run

modified versions of the software inside them, although the manufacturer

can do so. This is fundamentally incompatible with the aim of

protecting users' freedom to change the software. The systematic

pattern of such abuse occurs in the area of products for individuals to

use, which is precisely where it is most unacceptable. Therefore, we

have designed this version of the GPL to prohibit the practice for those

products. If such problems arise substantially in other domains, we

stand ready to extend this provision to those domains in future versions

of the GPL, as needed to protect the freedom of users.

Finally, every program is threatened constantly by software patents.

States should not allow patents to restrict development and use of

software on general-purpose computers, but in those that do, we wish to

avoid the special danger that patents applied to a free program could

make it effectively proprietary. To prevent this, the GPL assures that

patents cannot be used to render the program non-free.

The precise terms and conditions for copying, distribution and

modification follow.

TERMS AND CONDITIONS

0. Definitions.

"This License" refers to version 3 of the GNU General Public License.

"Copyright" also means copyright-like laws that apply to other kinds of

works, such as semiconductor masks.

"The Program" refers to any copyrightable work licensed under this

License. Each licensee is addressed as "you". "Licensees" and

"recipients" may be individuals or organizations.

To "modify" a work means to copy from or adapt all or part of the work

in a fashion requiring copyright permission, other than the making of an

exact copy. The resulting work is called a "modified version" of the

earlier work or a work "based on" the earlier work.

A "covered work" means either the unmodified Program or a work based

on the Program.

To "propagate" a work means to do anything with it that, without

permission, would make you directly or secondarily liable for

infringement under applicable copyright law, except executing it on a

computer or modifying a private copy. Propagation includes copying,

distribution (with or without modification), making available to the

public, and in some countries other activities as well.

To "convey" a work means any kind of propagation that enables other

parties to make or receive copies. Mere interaction with a user through

a computer network, with no transfer of a copy, is not conveying.

An interactive user interface displays "Appropriate Legal Notices"

to the extent that it includes a convenient and prominently visible

feature that (1) displays an appropriate copyright notice, and (2)

tells the user that there is no warranty for the work (except to the

extent that warranties are provided), that licensees may convey the

work under this License, and how to view a copy of this License. If

the interface presents a list of user commands or options, such as a

menu, a prominent item in the list meets this criterion.

1. Source Code.

The "source code" for a work means the preferred form of the work

for making modifications to it. "Object code" means any non-source

form of a work.

A "Standard Interface" means an interface that either is an official

standard defined by a recognized standards body, or, in the case of

interfaces specified for a particular programming language, one that

is widely used among developers working in that language.

The "System Libraries" of an executable work include anything, other

than the work as a whole, that (a) is included in the normal form of

packaging a Major Component, but which is not part of that Major

Component, and (b) serves only to enable use of the work with that

Major Component, or to implement a Standard Interface for which an

implementation is available to the public in source code form. A

"Major Component", in this context, means a major essential component

(kernel, window system, and so on) of the specific operating system

(if any) on which the executable work runs, or a compiler used to

produce the work, or an object code interpreter used to run it.

The "Corresponding Source" for a work in object code form means all

the source code needed to generate, install, and (for an executable

work) run the object code and to modify the work, including scripts to

control those activities. However, it does not include the work's

System Libraries, or general-purpose tools or generally available free

programs which are used unmodified in performing those activities but

which are not part of the work. For example, Corresponding Source

includes interface definition files associated with source files for

the work, and the source code for shared libraries and dynamically

linked subprograms that the work is specifically designed to require,

such as by intimate data communication or control flow between those

subprograms and other parts of the work.

The Corresponding Source need not include anything that users

can regenerate automatically from other parts of the Corresponding

Source.

The Corresponding Source for a work in source code form is that

same work.

2. Basic Permissions.

All rights granted under this License are granted for the term of

copyright on the Program, and are irrevocable provided the stated

conditions are met. This License explicitly affirms your unlimited

permission to run the unmodified Program. The output from running a

covered work is covered by this License only if the output, given its

content, constitutes a covered work. This License acknowledges your

rights of fair use or other equivalent, as provided by copyright law.

You may make, run and propagate covered works that you do not

convey, without conditions so long as your license otherwise remains

in force. You may convey covered works to others for the sole purpose

of having them make modifications exclusively for you, or provide you

with facilities for running those works, provided that you comply with

the terms of this License in conveying all material for which you do

not control copyright. Those thus making or running the covered works

for you must do so exclusively on your behalf, under your direction

and control, on terms that prohibit them from making any copies of

your copyrighted material outside their relationship with you.

Conveying under any other circumstances is permitted solely under

the conditions stated below. Sublicensing is not allowed; section 10

makes it unnecessary.

3. Protecting Users' Legal Rights From Anti-Circumvention Law.

No covered work shall be deemed part of an effective technological

measure under any applicable law fulfilling obligations under article

11 of the WIPO copyright treaty adopted on 20 December 1996, or

similar laws prohibiting or restricting circumvention of such

measures.

When you convey a covered work, you waive any legal power to forbid

circumvention of technological measures to the extent such circumvention

is effected by exercising rights under this License with respect to

the covered work, and you disclaim any intention to limit operation or

modification of the work as a means of enforcing, against the work's

users, your or third parties' legal rights to forbid circumvention of

technological measures.

4. Conveying Verbatim Copies.

You may convey verbatim copies of the Program's source code as you

receive it, in any medium, provided that you conspicuously and

appropriately publish on each copy an appropriate copyright notice;

keep intact all notices stating that this License and any

non-permissive terms added in accord with section 7 apply to the code;

keep intact all notices of the absence of any warranty; and give all

recipients a copy of this License along with the Program.

You may charge any price or no price for each copy that you convey,

and you may offer support or warranty protection for a fee.

5. Conveying Modified Source Versions.

You may convey a work based on the Program, or the modifications to

produce it from the Program, in the form of source code under the

terms of section 4, provided that you also meet all of these conditions:

a) The work must carry prominent notices stating that you modified

it, and giving a relevant date.

b) The work must carry prominent notices stating that it is

released under this License and any conditions added under section

7. This requirement modifies the requirement in section 4 to

"keep intact all notices".

c) You must license the entire work, as a whole, under this

License to anyone who comes into possession of a copy. This

License will therefore apply, along with any applicable section 7

additional terms, to the whole of the work, and all its parts,

regardless of how they are packaged. This License gives no

permission to license the work in any other way, but it does not

invalidate such permission if you have separately received it.

d) If the work has interactive user interfaces, each must display

Appropriate Legal Notices; however, if the Program has interactive

interfaces that do not display Appropriate Legal Notices, your

work need not make them do so.

A compilation of a covered work with other separate and independent

works, which are not by their nature extensions of the covered work,

and which are not combined with it such as to form a larger program,

in or on a volume of a storage or distribution medium, is called an

"aggregate" if the compilation and its resulting copyright are not

used to limit the access or legal rights of the compilation's users

beyond what the individual works permit. Inclusion of a covered work

in an aggregate does not cause this License to apply to the other

parts of the aggregate.

6. Conveying Non-Source Forms.

You may convey a covered work in object code form under the terms

of sections 4 and 5, provided that you also convey the

machine-readable Corresponding Source under the terms of this License,

in one of these ways:

a) Convey the object code in, or embodied in, a physical product

(including a physical distribution medium), accompanied by the

Corresponding Source fixed on a durable physical medium

customarily used for software interchange.

b) Convey the object code in, or embodied in, a physical product

(including a physical distribution medium), accompanied by a

written offer, valid for at least three years and valid for as

long as you offer spare parts or customer support for that product

model, to give anyone who possesses the object code either (1) a

copy of the Corresponding Source for all the software in the

product that is covered by this License, on a durable physical

medium customarily used for software interchange, for a price no

more than your reasonable cost of physically performing this

conveying of source, or (2) access to copy the

Corresponding Source from a network server at no charge.

c) Convey individual copies of the object code with a copy of the

written offer to provide the Corresponding Source. This

alternative is allowed only occasionally and noncommercially, and

only if you received the object code with such an offer, in accord

with subsection 6b.

d) Convey the object code by offering access from a designated

place (gratis or for a charge), and offer equivalent access to the

Corresponding Source in the same way through the same place at no

further charge. You need not require recipients to copy the

Corresponding Source along with the object code. If the place to

copy the object code is a network server, the Corresponding Source

may be on a different server (operated by you or a third party)

that supports equivalent copying facilities, provided you maintain

clear directions next to the object code saying where to find the

Corresponding Source. Regardless of what server hosts the

Corresponding Source, you remain obligated to ensure that it is

available for as long as needed to satisfy these requirements.

e) Convey the object code using peer-to-peer transmission, provided

you inform other peers where the object code and Corresponding

Source of the work are being offered to the general public at no

charge under subsection 6d.

A separable portion of the object code, whose source code is excluded

from the Corresponding Source as a System Library, need not be

included in conveying the object code work.

A "User Product" is either (1) a "consumer product", which means any

tangible personal property which is normally used for personal, family,

or household purposes, or (2) anything designed or sold for incorporation

into a dwelling. In determining whether a product is a consumer product,

doubtful cases shall be resolved in favor of coverage. For a particular

product received by a particular user, "normally used" refers to a

typical or common use of that class of product, regardless of the status

of the particular user or of the way in which the particular user

actually uses, or expects or is expected to use, the product. A product

is a consumer product regardless of whether the product has substantial

commercial, industrial or non-consumer uses, unless such uses represent

the only significant mode of use of the product.

"Installation Information" for a User Product means any methods,

procedures, authorization keys, or other information required to install

and execute modified versions of a covered work in that User Product from

a modified version of its Corresponding Source. The information must

suffice to ensure that the continued functioning of the modified object

code is in no case prevented or interfered with solely because

modification has been made.

If you convey an object code work under this section in, or with, or

specifically for use in, a User Product, and the conveying occurs as

part of a transaction in which the right of possession and use of the

User Product is transferred to the recipient in perpetuity or for a

fixed term (regardless of how the transaction is characterized), the

Corresponding Source conveyed under this section must be accompanied

by the Installation Information. But this requirement does not apply

if neither you nor any third party retains the ability to install

modified object code on the User Product (for example, the work has

been installed in ROM).

The requirement to provide Installation Information does not include a

requirement to continue to provide support service, warranty, or updates

for a work that has been modified or installed by the recipient, or for

the User Product in which it has been modified or installed. Access to a

network may be denied when the modification itself materially and

adversely affects the operation of the network or violates the rules and

protocols for communication across the network.

Corresponding Source conveyed, and Installation Information provided,

in accord with this section must be in a format that is publicly

documented (and with an implementation available to the public in

source code form), and must require no special password or key for

unpacking, reading or copying.

7. Additional Terms.

"Additional permissions" are terms that supplement the terms of this

License by making exceptions from one or more of its conditions.

Additional permissions that are applicable to the entire Program shall

be treated as though they were included in this License, to the extent

that they are valid under applicable law. If additional permissions

apply only to part of the Program, that part may be used separately

under those permissions, but the entire Program remains governed by

this License without regard to the additional permissions.

When you convey a copy of a covered work, you may at your option

remove any additional permissions from that copy, or from any part of

it. (Additional permissions may be written to require their own

removal in certain cases when you modify the work.) You may place

additional permissions on material, added by you to a covered work,

for which you have or can give appropriate copyright permission.

Notwithstanding any other provision of this License, for material you

add to a covered work, you may (if authorized by the copyright holders of

that material) supplement the terms of this License with terms:

a) Disclaiming warranty or limiting liability differently from the

terms of sections 15 and 16 of this License; or

b) Requiring preservation of specified reasonable legal notices or

author attributions in that material or in the Appropriate Legal

Notices displayed by works containing it; or

c) Prohibiting misrepresentation of the origin of that material, or

requiring that modified versions of such material be marked in

reasonable ways as different from the original version; or

d) Limiting the use for publicity purposes of names of licensors or

authors of the material; or

e) Declining to grant rights under trademark law for use of some

trade names, trademarks, or service marks; or

f) Requiring indemnification of licensors and authors of that

material by anyone who conveys the material (or modified versions of

it) with contractual assumptions of liability to the recipient, for

any liability that these contractual assumptions directly impose on

those licensors and authors.

All other non-permissive additional terms are considered "further

restrictions" within the meaning of section 10. If the Program as you

received it, or any part of it, contains a notice stating that it is

governed by this License along with a term that is a further

restriction, you may remove that term. If a license document contains

a further restriction but permits relicensing or conveying under this

License, you may add to a covered work material governed by the terms

of that license document, provided that the further restriction does

not survive such relicensing or conveying.

If you add terms to a covered work in accord with this section, you

must place, in the relevant source files, a statement of the

additional terms that apply to those files, or a notice indicating

where to find the applicable terms.

Additional terms, permissive or non-permissive, may be stated in the

form of a separately written license, or stated as exceptions;

the above requirements apply either way.

8. Termination.

You may not propagate or modify a covered work except as expressly

provided under this License. Any attempt otherwise to propagate or

modify it is void, and will automatically terminate your rights under

this License (including any patent licenses granted under the third

paragraph of section 11).

However, if you cease all violation of this License, then your

license from a particular copyright holder is reinstated (a)

provisionally, unless and until the copyright holder explicitly and

finally terminates your license, and (b) permanently, if the copyright

holder fails to notify you of the violation by some reasonable means

prior to 60 days after the cessation.

Moreover, your license from a particular copyright holder is

reinstated permanently if the copyright holder notifies you of the

violation by some reasonable means, this is the first time you have

received notice of violation of this License (for any work) from that

copyright holder, and you cure the violation prior to 30 days after

your receipt of the notice.

Termination of your rights under this section does not terminate the

licenses of parties who have received copies or rights from you under

this License. If your rights have been terminated and not permanently

reinstated, you do not qualify to receive new licenses for the same

material under section 10.

9. Acceptance Not Required for Having Copies.

You are not required to accept this License in order to receive or

run a copy of the Program. Ancillary propagation of a covered work

occurring solely as a consequence of using peer-to-peer transmission

to receive a copy likewise does not require acceptance. However,

nothing other than this License grants you permission to propagate or

modify any covered work. These actions infringe copyright if you do

not accept this License. Therefore, by modifying or propagating a

covered work, you indicate your acceptance of this License to do so.

10. Automatic Licensing of Downstream Recipients.

Each time you convey a covered work, the recipient automatically

receives a license from the original licensors, to run, modify and

propagate that work, subject to this License. You are not responsible

for enforcing compliance by third parties with this License.

An "entity transaction" is a transaction transferring control of an

organization, or substantially all assets of one, or subdividing an

organization, or merging organizations. If propagation of a covered

work results from an entity transaction, each party to that

transaction who receives a copy of the work also receives whatever

licenses to the work the party's predecessor in interest had or could

give under the previous paragraph, plus a right to possession of the

Corresponding Source of the work from the predecessor in interest, if

the predecessor has it or can get it with reasonable efforts.

You may not impose any further restrictions on the exercise of the

rights granted or affirmed under this License. For example, you may

not impose a license fee, royalty, or other charge for exercise of

rights granted under this License, and you may not initiate litigation

(including a cross-claim or counterclaim in a lawsuit) alleging that

any patent claim is infringed by making, using, selling, offering for

sale, or importing the Program or any portion of it.

11. Patents.

A "contributor" is a copyright holder who authorizes use under this

License of the Program or a work on which the Program is based. The

work thus licensed is called the contributor's "contributor version".

A contributor's "essential patent claims" are all patent claims

owned or controlled by the contributor, whether already acquired or

hereafter acquired, that would be infringed by some manner, permitted

by this License, of making, using, or selling its contributor version,

but do not include claims that would be infringed only as a

consequence of further modification of the contributor version. For

purposes of this definition, "control" includes the right to grant

patent sublicenses in a manner consistent with the requirements of

this License.

Each contributor grants you a non-exclusive, worldwide, royalty-free

patent license under the contributor's essential patent claims, to

make, use, sell, offer for sale, import and otherwise run, modify and

propagate the contents of its contributor version.

In the following three paragraphs, a "patent license" is any express

agreement or commitment, however denominated, not to enforce a patent

(such as an express permission to practice a patent or covenant not to

sue for patent infringement). To "grant" such a patent license to a

party means to make such an agreement or commitment not to enforce a

patent against the party.

If you convey a covered work, knowingly relying on a patent license,

and the Corresponding Source of the work is not available for anyone

to copy, free of charge and under the terms of this License, through a

publicly available network server or other readily accessible means,

then you must either (1) cause the Corresponding Source to be so

available, or (2) arrange to deprive yourself of the benefit of the

patent license for this particular work, or (3) arrange, in a manner

consistent with the requirements of this License, to extend the patent

license to downstream recipients. "Knowingly relying" means you have

actual knowledge that, but for the patent license, your conveying the

covered work in a country, or your recipient's use of the covered work

in a country, would infringe one or more identifiable patents in that

country that you have reason to believe are valid.

If, pursuant to or in connection with a single transaction or

arrangement, you convey, or propagate by procuring conveyance of, a

covered work, and grant a patent license to some of the parties

receiving the covered work authorizing them to use, propagate, modify

or convey a specific copy of the covered work, then the patent license

you grant is automatically extended to all recipients of the covered

work and works based on it.

A patent license is "discriminatory" if it does not include within

the scope of its coverage, prohibits the exercise of, or is

conditioned on the non-exercise of one or more of the rights that are

specifically granted under this License. You may not convey a covered

work if you are a party to an arrangement with a third party that is

in the business of distributing software, under which you make payment

to the third party based on the extent of your activity of conveying

the work, and under which the third party grants, to any of the

parties who would receive the covered work from you, a discriminatory

patent license (a) in connection with copies of the covered work

conveyed by you (or copies made from those copies), or (b) primarily

for and in connection with specific products or compilations that

contain the covered work, unless you entered into that arrangement,

or that patent license was granted, prior to 28 March 2007.

Nothing in this License shall be construed as excluding or limiting

any implied license or other defenses to infringement that may

otherwise be available to you under applicable patent law.

12. No Surrender of Others' Freedom.

If conditions are imposed on you (whether by court order, agreement or

otherwise) that contradict the conditions of this License, they do not

excuse you from the conditions of this License. If you cannot convey a

covered work so as to satisfy simultaneously your obligations under this

License and any other pertinent obligations, then as a consequence you may

not convey it at all. For example, if you agree to terms that obligate you

to collect a royalty for further conveying from those to whom you convey

the Program, the only way you could satisfy both those terms and this

License would be to refrain entirely from conveying the Program.

13. Use with the GNU Affero General Public License.

Notwithstanding any other provision of this License, you have

permission to link or combine any covered work with a work licensed

under version 3 of the GNU Affero General Public License into a single

combined work, and to convey the resulting work. The terms of this

License will continue to apply to the part which is the covered work,

but the special requirements of the GNU Affero General Public License,

section 13, concerning interaction through a network will apply to the

combination as such.

14. Revised Versions of this License.

The Free Software Foundation may publish revised and/or new versions of

the GNU General Public License from time to time. Such new versions will

be similar in spirit to the present version, but may differ in detail to

address new problems or concerns.

Each version is given a distinguishing version number. If the

Program specifies that a certain numbered version of the GNU General

Public License "or any later version" applies to it, you have the

option of following the terms and conditions either of that numbered

version or of any later version published by the Free Software

Foundation. If the Program does not specify a version number of the

GNU General Public License, you may choose any version ever published

by the Free Software Foundation.

If the Program specifies that a proxy can decide which future

versions of the GNU General Public License can be used, that proxy's

public statement of acceptance of a version permanently authorizes you

to choose that version for the Program.

Later license versions may give you additional or different

permissions. However, no additional obligations are imposed on any

author or copyright holder as a result of your choosing to follow a

later version.

15. Disclaimer of Warranty.

THERE IS NO WARRANTY FOR THE PROGRAM, TO THE EXTENT PERMITTED BY

APPLICABLE LAW. EXCEPT WHEN OTHERWISE STATED IN WRITING THE COPYRIGHT

HOLDERS AND/OR OTHER PARTIES PROVIDE THE PROGRAM "AS IS" WITHOUT WARRANTY

OF ANY KIND, EITHER EXPRESSED OR IMPLIED, INCLUDING, BUT NOT LIMITED TO,

THE IMPLIED WARRANTIES OF MERCHANTABILITY AND FITNESS FOR A PARTICULAR

PURPOSE. THE ENTIRE RISK AS TO THE QUALITY AND PERFORMANCE OF THE PROGRAM

IS WITH YOU. SHOULD THE PROGRAM PROVE DEFECTIVE, YOU ASSUME THE COST OF

ALL NECESSARY SERVICING, REPAIR OR CORRECTION.

16. Limitation of Liability.

IN NO EVENT UNLESS REQUIRED BY APPLICABLE LAW OR AGREED TO IN WRITING

WILL ANY COPYRIGHT HOLDER, OR ANY OTHER PARTY WHO MODIFIES AND/OR CONVEYS

THE PROGRAM AS PERMITTED ABOVE, BE LIABLE TO YOU FOR DAMAGES, INCLUDING ANY

GENERAL, SPECIAL, INCIDENTAL OR CONSEQUENTIAL DAMAGES ARISING OUT OF THE

USE OR INABILITY TO USE THE PROGRAM (INCLUDING BUT NOT LIMITED TO LOSS OF

DATA OR DATA BEING RENDERED INACCURATE OR LOSSES SUSTAINED BY YOU OR THIRD

PARTIES OR A FAILURE OF THE PROGRAM TO OPERATE WITH ANY OTHER PROGRAMS),

EVEN IF SUCH HOLDER OR OTHER PARTY HAS BEEN ADVISED OF THE POSSIBILITY OF

SUCH DAMAGES.

17. Interpretation of Sections 15 and 16.

If the disclaimer of warranty and limitation of liability provided

above cannot be given local legal effect according to their terms,

reviewing courts shall apply local law that most closely approximates

an absolute waiver of all civil liability in connection with the

Program, unless a warranty or assumption of liability accompanies a

copy of the Program in return for a fee.

END OF TERMS AND CONDITIONS

How to Apply These Terms to Your New Programs

If you develop a new program, and you want it to be of the greatest

possible use to the public, the best way to achieve this is to make it

free software which everyone can redistribute and change under these terms.

To do so, attach the following notices to the program. It is safest

to attach them to the start of each source file to most effectively

state the exclusion of warranty; and each file should have at least

the "copyright" line and a pointer to where the full notice is found.

Copyright (C)

This program is free software: you can redistribute it and/or modify

it under the terms of the GNU General Public License as published by

the Free Software Foundation, either version 3 of the License, or

(at your option) any later version.

This program is distributed in the hope that it will be useful,

but WITHOUT ANY WARRANTY; without even the implied warranty of

MERCHANTABILITY or FITNESS FOR A PARTICULAR PURPOSE. See the

GNU General Public License for more details.

You should have received a copy of the GNU General Public License

along with this program. If not, see .

Also add information on how to contact you by electronic and paper mail.

If the program does terminal interaction, make it output a short

notice like this when it starts in an interactive mode:

Copyright (C)

This program comes with ABSOLUTELY NO WARRANTY; for details type `show w'.

This is free software, and you are welcome to redistribute it

under certain conditions; type `show c' for details.

The hypothetical commands `show w' and `show c' should show the appropriate

parts of the General Public License. Of course, your program's commands

might be different; for a GUI interface, you would use an "about box".

You should also get your employer (if you work as a programmer) or school,

if any, to sign a "copyright disclaimer" for the program, if necessary.

For more information on this, and how to apply and follow the GNU GPL, see

.

The GNU General Public License does not permit incorporating your program

into proprietary programs. If your program is a subroutine library, you

may consider it more useful to permit linking proprietary applications with

the library. If this is what you want to do, use the GNU Lesser General

Public License instead of this License. But first, please read

.

�������������������������������������������������������������������������������������������������������������������������������������������������������������������������������������BerryNet-upstream-3.9.0/README.md�������������������������������������������������������������������0000664�0000000�0000000�00000020557�13606321373�0016407�0����������������������������������������������������������������������������������������������������ustar�00root����������������������������root����������������������������0000000�0000000������������������������������������������������������������������������������������������������������������������������������������������������������������������������

Deep Learning Gateway on Raspberry Pi And Other Edge Devices

[Supporting BerryNet](https://github.com/DT42/BerryNet/wiki/Donation)

* [Become a backer or sponsor on Open Collective](https://opencollective.com/berrynet).

* [One-time donation via PayPal or crypto-currencies](https://github.com/DT42/BerryNet/wiki/Donation#one-time-donations).

# Introduction

This project turns edge devices such as the Raspberry Pi into intelligent gateways that run deep learning locally. No internet connection is required; everything is done on the edge device itself. Furthermore, multiple edge devices can form a distributed AIoT network.

At DT42, we believe that bringing deep learning to edge devices is the way of the future. It not only reduces the cost of data transmission and storage but also lets devices respond to events shown in images or videos without connecting to the cloud.

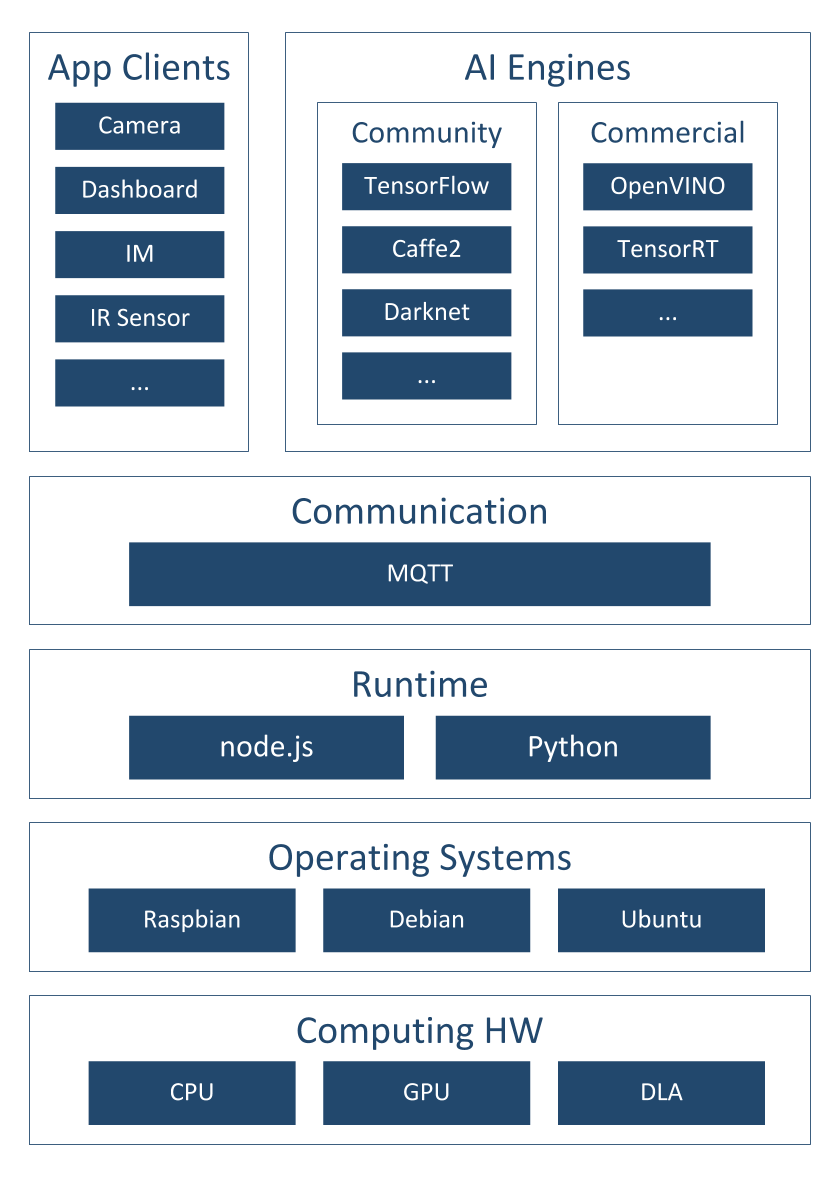

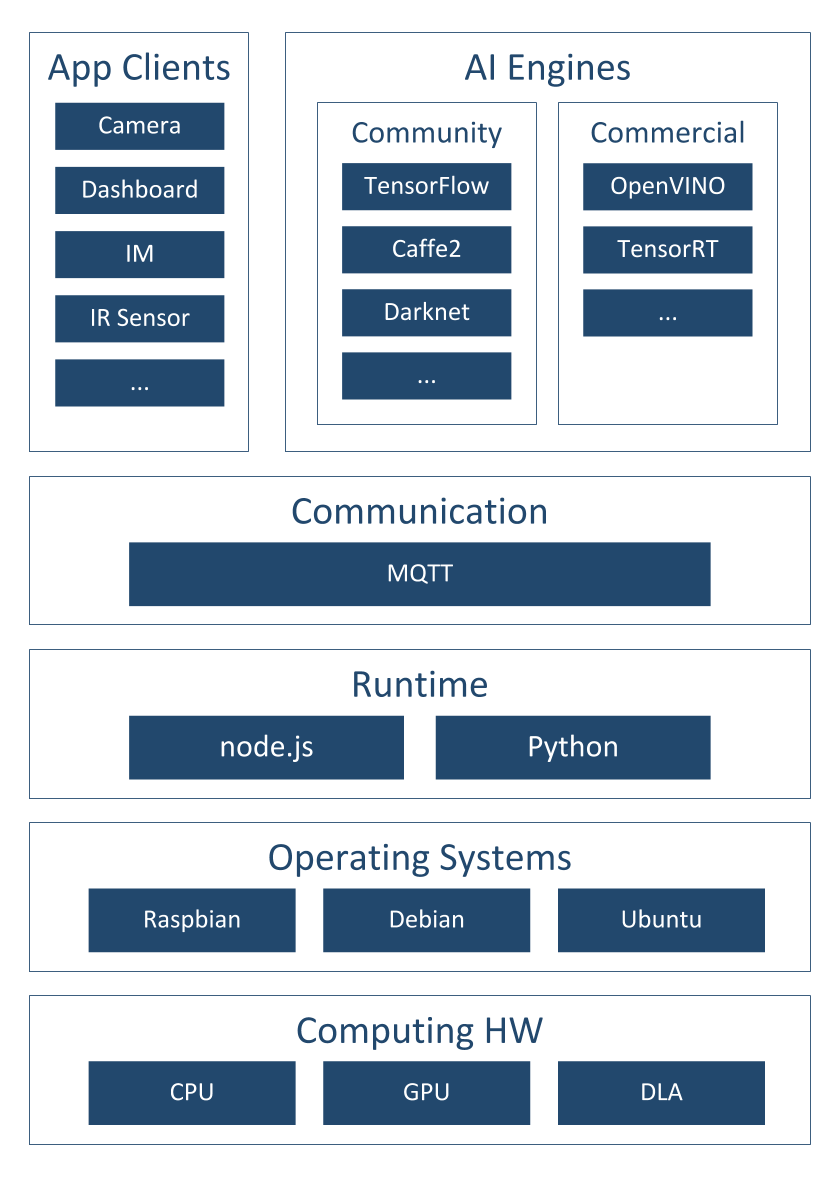

Figure 1: BerryNet architecture

Figure 1 shows the software architecture of the project. We use Node.js/Python, MQTT, and an AI engine to analyze images or video frames with deep learning. So far, there are two default types of AI engine: the classification engine (with the Inception v3 [[1]](https://arxiv.org/pdf/1512.00567.pdf) model) and the object detection engine (with the TinyYOLO [[2]](https://pjreddie.com/media/files/papers/YOLO9000.pdf) model or the MobileNet SSD [[3]](https://arxiv.org/pdf/1704.04861.pdf) model). Figure 2 shows the differences between classification and object detection.

Figure 2: Classification vs detection

One application of this intelligent gateway is to use a camera to monitor a place you care about. For example, Figure 3 shows the analyzed results from a camera hosted in the DT42 office. The frames were captured by the IP camera and submitted to the AI engine, and the output from the AI engine is shown in the dashboard. We are working on email and IM notifications for the next release, so that you can be notified when, for example, a dog enters the meeting area.

Figure 3: Object detection result example

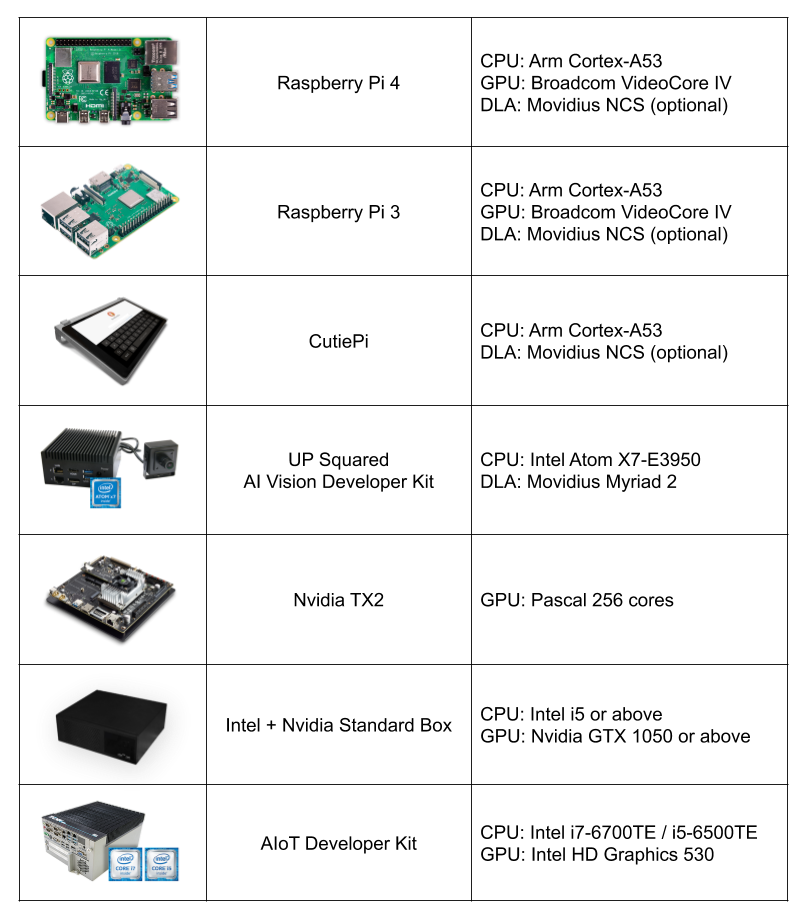

To bring an easy and flexible edge AI experience to users, we keep expanding support for AI engines and reference hardware.

Figure 4: Reference hardware

# Installation

You can install BerryNet from a pre-built image or from source. Please refer to the [Wiki page](https://github.com/DT42/BerryNet/wiki/Installation) for details.

We are working on getting BerryNet into the Debian repository, so in the future you will be able to install it with a single command.

Here are the quick steps to install from source:

```

$ git clone https://github.com/DT42/BerryNet.git

$ cd BerryNet

$ ./configure

```

# Start and Stop BerryNet

BerryNet builds an AIoT application by connecting independent components together. Component types include, but are not limited to, AI engines, I/O processors, data processors (algorithms), and data collectors.

We recommend managing BerryNet components with [supervisor](http://supervisord.org/), but you can also run them manually. With supervisor, you manage BerryNet via `supervisorctl`:

```

# Check status of BerryNet components

$ sudo supervisorctl status all

# Stop Camera client

$ sudo supervisorctl stop camera

# Restart all components

$ sudo supervisorctl restart all

# Show last stderr logs of camera client

$ sudo supervisorctl tail camera stderr

```

For more `supervisorctl` commands, please refer to the [official tutorial](http://supervisord.org/running.html#running-supervisorctl).
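If you want to check component state from a script, the text printed by `supervisorctl status` can be parsed. The following is a minimal sketch, assuming the usual `name STATE description` column layout. The function name `parse_status` and the sample text are illustrative and are not part of BerryNet.

```python
def parse_status(output):
    """Map each program name to its state from `supervisorctl status` text."""
    states = {}
    for line in output.splitlines():
        fields = line.split(None, 2)  # name, state, free-form description
        if len(fields) >= 2:
            states[fields[0]] = fields[1]
    return states


# Illustrative sample only; real names depend on your supervisor config.
sample = """\
camera       RUNNING   pid 1201, uptime 0:05:12
dashboard    STOPPED   Not started
"""
print(parse_status(sample))
```

In practice the text would come from running `sudo supervisorctl status all` and capturing its standard output.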

The default application has three components:

* Camera client to provide input images

* Object detection engine to find type and position of the detected objects in an image

* Dashboard to display the detection results

You will learn how to configure or change the components in the [Configuration](#configuration) section.

# Dashboard: Freeboard

## Open Freeboard on RPi3 (with touch screen)

Freeboard is a web-based dashboard. Here are the steps to show the detection result image and text on Freeboard:

* 1: Enter `http://127.0.0.1:8080` in the browser's URL bar, and press Enter

* 2: [Download](https://raw.githubusercontent.com/DT42/BerryNet/master/config/dashboard-tflitedetector.json) the Freeboard configuration for the default application, `dashboard-tflitedetector.json`

* 3: Click `LOAD FREEBOARD`, and select the newly downloaded `dashboard-tflitedetector.json`

* 4: Wait a few seconds; you should see the inference result image and text on Freeboard

## Open Freeboard on another computer

Assuming that you have two devices:

* Device A with IP `192.168.1.42`, which runs the BerryNet default application

* Device B with IP `192.168.1.43`, on which you want to open Freeboard and see the detection results

Here are the steps:

* 1: Enter `http://192.168.1.42:8080` in the browser's URL bar, and press Enter

* 2: [Download](https://raw.githubusercontent.com/DT42/BerryNet/master/config/dashboard-tflitedetector.json) the Freeboard configuration for the default application, `dashboard-tflitedetector.json`

* 3: Replace every `localhost` with `192.168.1.42` in `dashboard-tflitedetector.json`

* 4: Click `LOAD FREEBOARD`, and select the newly downloaded `dashboard-tflitedetector.json`

* 5: Wait a few seconds; you should see the inference result image and text on Freeboard
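The `localhost` replacement step can also be scripted. Below is a small sketch in Python; the helper name `point_dashboard_at` is hypothetical, and the demo file content is made up so the example runs without the real configuration file.

```python
import tempfile
from pathlib import Path


def point_dashboard_at(config_path, gateway_ip):
    """Rewrite every `localhost` in a Freeboard config to `gateway_ip`."""
    path = Path(config_path)
    text = path.read_text(encoding="utf-8")
    path.write_text(text.replace("localhost", gateway_ip), encoding="utf-8")


# Demonstration on a throwaway file with made-up content.
with tempfile.TemporaryDirectory() as tmp:
    demo = Path(tmp) / "dashboard-tflitedetector.json"
    demo.write_text('{"server": "localhost"}', encoding="utf-8")
    point_dashboard_at(demo, "192.168.1.42")
    result = demo.read_text(encoding="utf-8")
print(result)
```

For real use, call `point_dashboard_at` on the downloaded `dashboard-tflitedetector.json` with the IP of the device running BerryNet.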

For more details about dashboard configuration (e.g. how to add widgets), please refer to [Freeboard project](https://github.com/Freeboard/freeboard).

# Enable Data Collector

You might want to store the snapshot and inference results for data analysis.

To run the BerryNet data collector manually, use the command below:

```

$ bn_data_collector --topic-config --data-dirpath

```

The topic config specifies which MQTT topics the data collector listens to and which handler each topic triggers. Here is a topic config example:

```

{

"berrynet/engine/tflitedetector/result": "self.update"

}

```

The inference result image and text will be saved into the indicated result directory.
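To illustrate the idea of a topic-to-handler mapping, here is a minimal sketch of how a string such as `"self.update"` could be resolved to a method and called when a message arrives. This is an assumption for illustration only: the `Collector` class and its `on_message` method are hypothetical and do not reproduce BerryNet's actual data collector.

```python
import json


class Collector:
    """Hypothetical stand-in for a data collector; not BerryNet's class."""

    def __init__(self, topic_config_text):
        self.saved = []
        # Resolve strings such as "self.update" to bound methods.
        self.handlers = {
            topic: getattr(self, name.split(".", 1)[1])
            for topic, name in json.loads(topic_config_text).items()
        }

    def update(self, payload):
        # A real collector would write the payload to disk instead.
        self.saved.append(payload)

    def on_message(self, topic, payload):
        handler = self.handlers.get(topic)
        if handler is not None:
            handler(payload)


config = '{"berrynet/engine/tflitedetector/result": "self.update"}'
collector = Collector(config)
collector.on_message("berrynet/engine/tflitedetector/result", "frame-1")
collector.on_message("berrynet/other", "ignored")
print(collector.saved)
```

Messages on topics that are absent from the config are dropped, which mirrors the idea that the collector only listens to the topics it was given.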

# Configuration

The default supervisor config is at `/etc/supervisor/conf.d/berrynet-tflite.conf`. To write your own supervisor config, see the [example supervisor configs](https://github.com/DT42/BerryNet/tree/master/config/supervisor/conf.d) in the BerryNet repository.

## Camera Client

The BerryNet camera client can run in two modes: stream or file. Stream mode supports local cameras (e.g. USB camera and RPi camera) and IP cameras, and the input frame rate (FPS) can be changed on demand (default is 1). In file mode, the user specifies a file path as the input source.

To run camera client in stream mode:

```

$ bn_camera --fps 5

```

To run camera client in file mode:

```

$ bn_camera --mode file --filepath

```

# Use Your Data To Train

For the original instructions on retraining a YOLOv2 model, see the [GitHub repository of darknet](https://github.com/AlexeyAB/darknet#how-to-train-to-detect-your-custom-objects).

The current version of BerryNet uses TinyYOLO instead of YOLOv2.

The major differences are:

1. Create the file `yolo-obj.cfg` with the same content as `tiny-yolo.cfg`

2. Download pre-trained weights of darknet reference model, `darknet.weights.12`, for the convolutional layers (6.1MB)

https://drive.google.com/drive/folders/0B-oZJEwmkAObMzAtc2QzZDhyVGM?usp=sharing

The remaining steps are the same as for retraining YOLO.

If you use [LabelMe](http://labelme.csail.mit.edu/Release3.0/) to annotate data, `utils/xmlTotxt.py` can help convert the xml format to the text format that darknet uses.
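For orientation, darknet label files hold one line per object in the form `class x_center y_center width height`, with coordinates normalized by the image size. The sketch below shows only that arithmetic for a single bounding box. It is not the code of `utils/xmlTotxt.py`; the function name and the sample numbers are illustrative.

```python
def to_darknet_line(class_id, box, image_size):
    """Format one box as `class x_center y_center width height`, normalized."""
    xmin, ymin, xmax, ymax = box
    img_w, img_h = image_size
    x_center = (xmin + xmax) / 2.0 / img_w
    y_center = (ymin + ymax) / 2.0 / img_h
    width = (xmax - xmin) / img_w
    height = (ymax - ymin) / img_h
    return f"{class_id} {x_center:.6f} {y_center:.6f} {width:.6f} {height:.6f}"


# A 200x200 pixel box inside a 640x480 image, class index 0.
line = to_darknet_line(0, (100, 50, 300, 250), (640, 480))
print(line)  # 0 0.312500 0.312500 0.312500 0.416667
```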

# Discussion

Please use the [Telegram Group](https://t.me/berrynetdev) or [Google Group](https://groups.google.com/a/dt42.io/d/forum/berrynet) for questions, suggestions, or discussion of ideas.

�������������������������������������������������������������������������������������������������������������������������������������������������BerryNet-upstream-3.9.0/README.md.old���������������������������������������������������������������0000664�0000000�0000000�00000014024�13606321373�0017154�0����������������������������������������������������������������������������������������������������ustar�00root����������������������������root����������������������������0000000�0000000������������������������������������������������������������������������������������������������������������������������������������������������������������������������# BerryNet: Deep Learning Gateway on Raspberry Pi And Other Edge Devices

This project turns edge devices such as the Raspberry Pi 3 into intelligent gateways that run deep learning locally. No internet connection is required; everything is done on the edge device itself. Furthermore, multiple edge devices can form a distributed AIoT network.

At DT42, we believe that bringing deep learning to edge devices is the way of the future. It not only reduces the cost of data transmission and storage but also lets devices respond to events shown in images or videos without connecting to the cloud.

Figure 1: BerryNet architecture

Figure 1 shows the software architecture of the project. We use Node.js/Python, MQTT, and an AI engine to analyze images or video frames with deep learning. So far, there are two default types of AI engine: the classification engine (with the Inception v3 [[1]](https://arxiv.org/pdf/1512.00567.pdf) model) and the object detection engine (with the TinyYOLO [[2]](https://pjreddie.com/media/files/papers/YOLO9000.pdf) model or the MobileNet SSD [[3]](https://arxiv.org/pdf/1704.04861.pdf) model). Figure 2 shows the differences between classification and object detection.

Figure 2: Classification vs detection

One application of this intelligent gateway is to use a camera to monitor a place you care about. For example, Figure 3 shows the analyzed results from a camera hosted in the DT42 office. The frames were captured by the IP camera and submitted to the AI engine, and the output from the AI engine is shown in the dashboard. We are working on email and IM notifications for the next release, so that you can be notified when, for example, a dog enters the meeting area.

Figure 3: Object detection result example

To bring an easy and flexible edge AI experience to users, we keep expanding support for AI engines and reference hardware.

Figure 4: Reference hardware

# Installation

You can install BerryNet from a pre-built image or from source. Please refer to the [Wiki page](https://github.com/DT42/BerryNet/wiki/Installation) for details.

We are working on getting BerryNet into the Debian repository, so in the future you will be able to install it with a single command.

Here are the quick steps to install from source:

```

$ git clone https://github.com/DT42/BerryNet.git

$ cd BerryNet

$ ./configure

```

# Start and Stop BerryNet

BerryNet is managed by [systemd](https://freedesktop.org/wiki/Software/systemd/). You can manage BerryNet via `berrynet-manager`:

```

$ berrynet-manager [start | stop | status | log]

```

# Configuration

All the configurations are in `config.js`.

* Choose AI Engine.

* Two types of AI engines currently: object classifier and object detector.

* Configure IP camera's snapshot access interface.

* Please refer to [IP camera setup](doc/ipcam.md) for more details.

* MQTT topics.

# Dashboard

## Open dashboard on RPi3 (with touch screen)

Open browser and enter the URL:

`http://localhost:8080/index.html#source=dashboard.json`

The default dashboard configuration file will be loaded.

## Open dashboard on browser from any computer

Open browser and enter the URL:

`http://:8080/index.html#source=dashboard.json`

Click the data sources, and change MQTT broker's IP address to the gateway's IP.

For more details about dashboard configuration (e.g. how to add widgets), please refer to [freeboard project](https://github.com/Freeboard/freeboard).

# Provide Image Input

To capture an image via configured IP camera

```

$ mosquitto_pub -h localhost -t berrynet/event/camera -m snapshot_ipcam

```

To capture an image via board-connected camera (RPi camera or USB webcam)

```

$ mosquitto_pub -h localhost -t berrynet/event/camera -m snapshot_boardcam

```

To provide a local image

```

$ mosquitto_pub -h localhost -t berrynet/event/localImage -m

```

To start and stop streaming from board-connected camera

```

$ mosquitto_pub -h localhost -t berrynet/event/camera -m stream_boardcam_start

$ mosquitto_pub -h localhost -t berrynet/event/camera -m stream_boardcam_stop

```

To start and stop streaming from Nest IP camera

```

$ mosquitto_pub -h localhost -t berrynet/event/camera -m stream_nest_ipcam_start

$ mosquitto_pub -h localhost -t berrynet/event/camera -m stream_nest_ipcam_stop

```
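The camera commands above differ only in the message they publish to `berrynet/event/camera`, so they can be generated from one helper. A minimal sketch, assuming `mosquitto_pub` is installed; the helper name `camera_command` is hypothetical.

```python
import shlex

CAMERA_TOPIC = "berrynet/event/camera"


def camera_command(message, host="localhost"):
    """Build the mosquitto_pub argument list for one camera event."""
    return ["mosquitto_pub", "-h", host, "-t", CAMERA_TOPIC, "-m", message]


cmd = camera_command("snapshot_boardcam")
print(shlex.join(cmd))
```

The returned list can be passed to `subprocess.run(cmd, check=True)` to actually publish the event.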

# Enable Data Collector

You might want to store the snapshot and inference results for data analysis.

To enable the data collector, set the storage directory path in `config.js`:

```

config.storageDirPath = '';

```

and restart BerryNet.

# Use Your Data To Train

For the original instructions on retraining a YOLOv2 model, see the [GitHub repository of darknet](https://github.com/AlexeyAB/darknet#how-to-train-to-detect-your-custom-objects).

The current version of BerryNet uses TinyYOLO instead of YOLOv2.

The major differences are:

1. Create file yolo-obj.cfg with the same content as in `tiny-yolo.cfg`

2. Download pre-trained weights of darknet reference model, `darknet.weights.12`, for the convolutional layers (6.1MB)

https://drive.google.com/drive/folders/0B-oZJEwmkAObMzAtc2QzZDhyVGM?usp=sharing

The remaining steps are the same as for retraining YOLO.

If you use [LabelMe](http://labelme.csail.mit.edu/Release3.0/) to annotate data, `utils/xmlTotxt.py` can help convert the xml format to the text format that darknet uses.

# Discussion

Please use the [Telegram Group](https://t.me/berrynetdev) or [Google Group](https://groups.google.com/a/dt42.io/d/forum/berrynet) for questions, suggestions, or discussion of ideas.

������������������������������������������������������������������������������������������������������������������������������������������������������������������������������������������������������������������������������������������������������������������������������������������������������������������������������������������������������������������������������������������������������������������������������������������������������������������������������������������������������������BerryNet-upstream-3.9.0/berrynet-manager������������������������������������������������������������0000775�0000000�0000000�00000003412�13606321373�0020307�0����������������������������������������������������������������������������������������������������ustar�00root����������������������������root����������������������������0000000�0000000������������������������������������������������������������������������������������������������������������������������������������������������������������������������#! /bin/sh

#
# Copyright 2017 DT42
#
# This file is part of BerryNet.
#
# BerryNet is free software: you can redistribute it and/or modify
# it under the terms of the GNU General Public License as published by
# the Free Software Foundation, either version 3 of the License, or
# (at your option) any later version.
#
# BerryNet is distributed in the hope that it will be useful,
# but WITHOUT ANY WARRANTY; without even the implied warranty of
# MERCHANTABILITY or FITNESS FOR A PARTICULAR PURPOSE. See the
# GNU General Public License for more details.
#
# You should have received a copy of the GNU General Public License
# along with BerryNet. If not, see <http://www.gnu.org/licenses/>.

# Print usage information and exit with a non-zero status.
help() {
    echo "Usage: $0 {start|stop|status|log}"
    echo " start : launch dlsystem"
    echo " stop : terminate dlsystem"
    echo " status : check the state of all services"
    echo " log : dump logfiles of all services"
    exit 1
}

if [ $# -lt 1 ]; then
    help
fi

case $1 in
    start | stop | status)
        # Apply the same systemctl action to every BerryNet service.
        sudo systemctl "$1" \
            detection_fast_server.service \
            agent.service \
            broker.service \
            dashboard.service \
            localimg.service \
            camera.service \
            journal.service \
            data_collector.service
        ;;
    log)
        # Dump the journal of each service in turn.
        sudo journalctl -x --no-pager -u detection_fast_server.service
        sudo journalctl -x --no-pager -u agent.service
        sudo journalctl -x --no-pager -u broker.service
        sudo journalctl -x --no-pager -u dashboard.service
        sudo journalctl -x --no-pager -u localimg.service
        sudo journalctl -x --no-pager -u camera.service
        sudo journalctl -x --no-pager -u journal.service
        sudo journalctl -x --no-pager -u data_collector.service
        ;;
    *)
        help
        ;;
esac

BerryNet-upstream-3.9.0/berrynet/__init__.py

import os

from logzero import setup_logger

# Save the log file in a different place to prevent permission errors.
if os.geteuid() == 0:  # root
    LOGGING_FLLEPATH = '/tmp/berrynet.log'
else:
    LOGGING_FLLEPATH = '{}/.cache/berrynet.log'.format(os.getenv('HOME'))

logger = setup_logger(name='berrynet-logger', logfile=LOGGING_FLLEPATH)

BerryNet-upstream-3.9.0/berrynet/client/__init__.py (empty file)

BerryNet-upstream-3.9.0/berrynet/client/camera.py

#!/usr/bin/python3

#
# Copyright 2018 DT42
#
# This file is part of BerryNet.
#
# BerryNet is free software: you can redistribute it and/or modify
# it under the terms of the GNU General Public License as published by
# the Free Software Foundation, either version 3 of the License, or
# (at your option) any later version.
#
# BerryNet is distributed in the hope that it will be useful,
# but WITHOUT ANY WARRANTY; without even the implied warranty of
# MERCHANTABILITY or FITNESS FOR A PARTICULAR PURPOSE. See the
# GNU General Public License for more details.
#
# You should have received a copy of the GNU General Public License
# along with BerryNet. If not, see <http://www.gnu.org/licenses/>.

import argparse
import logging
import time

from datetime import datetime

import cv2

from berrynet import logger
from berrynet.comm import Communicator
from berrynet.comm import payload

def parse_args():
    """Parse command-line options and return them as a dict."""
    ap = argparse.ArgumentParser()
    ap.add_argument(
        '--mode',
        default='stream',
        help='Camera creates frame(s) from stream or file. (default: stream)'
    )
    ap.add_argument(
        '--stream-src',
        type=str,
        default='0',
        help=('Camera stream source. '
              'It can be device node ID or RTSP URL. '
              '(default: 0)')
    )
    ap.add_argument(
        '--fps',
        type=float,
        default=1,
        help='Frames per second in streaming mode. (default: 1)'
    )
    ap.add_argument(
        '--filepath',
        default='',
        help='Input image path in file mode. (default: empty)'
    )
    ap.add_argument(
        '--broker-ip',
        default='localhost',
        help='MQTT broker IP.'
    )
    ap.add_argument(
        '--broker-port',
        default=1883,
        type=int,
        help='MQTT broker port.'
    )
    ap.add_argument(
        '--display',
        action='store_true',
        help=('Open a window and display the sent out frames. '
              'This argument is only effective in stream mode.')
    )
    ap.add_argument(
        '--debug',
        action='store_true',
        help='Debug mode toggle'
    )
    return vars(ap.parse_args())

def main():
    args = parse_args()
    if args['debug']:
        logger.setLevel(logging.DEBUG)
    else:
        logger.setLevel(logging.INFO)

    comm_config = {
        'subscribe': {},
        'broker': {
            'address': args['broker_ip'],
            'port': args['broker_port']
        }
    }
    comm = Communicator(comm_config, debug=True)

    # Elapsed time since t in milliseconds. timedelta.microseconds holds only
    # the sub-second component, so total_seconds() is used instead.
    duration = lambda t: (datetime.now() - t).total_seconds() * 1000

    if args['mode'] == 'stream':
        counter = 0

        # Check input stream source
        if args['stream_src'].isdigit():
            # source is a physically connected camera
            stream_source = '/dev/video{}'.format(int(args['stream_src']))
            capture = cv2.VideoCapture(int(args['stream_src']))
        else:
            # source is an IP camera
            stream_source = args['stream_src']
            capture = cv2.VideoCapture(args['stream_src'])

        cam_fps = capture.get(cv2.CAP_PROP_FPS)
        if cam_fps > 30 or cam_fps < 1:
            logger.warning('Camera FPS is {} (>30 or <1). Set it to 30.'.format(cam_fps))
            cam_fps = 30
        out_fps = args['fps']
        # Send one frame out of every `interval` captured frames. Clamp to at
        # least 1 so an output FPS above the camera FPS still sends frames.
        interval = max(1, int(cam_fps / out_fps))

        # warmup
        #t_warmup_start = time.time()
        #t_warmup_now = time.time()
        #warmup_counter = 0
        #while t_warmup_now - t_warmup_start < 1:
        #    capture.read()
        #    warmup_counter += 1
        #    t_warmup_now = time.time()

        logger.debug('===== VideoCapture Information =====')
        logger.debug('Stream Source: {}'.format(stream_source))
        logger.debug('Camera FPS: {}'.format(cam_fps))
        logger.debug('Output FPS: {}'.format(out_fps))
        logger.debug('Interval: {}'.format(interval))
        #logger.debug('Warmup Counter: {}'.format(warmup_counter))
        logger.debug('====================================')

        while True:
            status, im = capture.read()
            if status is False:
                logger.warning('ERROR: Failure happened when reading frame')
                # No image was returned, so skip encoding and sending.
                continue

            counter += 1
            if counter == interval:
                logger.debug('Drop frames: {}'.format(counter-1))
                counter = 0

                # Open a window and display the ready-to-send frame.
                # This is useful for development and debugging.
                if args['display']:
                    cv2.imshow('Frame', im)
                    cv2.waitKey(1)

                t = datetime.now()
                retval, jpg_bytes = cv2.imencode('.jpg', im)
                mqtt_payload = payload.serialize_jpg(jpg_bytes)
                comm.send('berrynet/data/rgbimage', mqtt_payload)
                logger.debug('send: {} ms'.format(duration(t)))

    elif args['mode'] == 'file':
        # Prepare MQTT payload
        im = cv2.imread(args['filepath'])
        if im is None:
            # cv2.imread returns None when the file is missing or unreadable.
            logger.error('Failed to read image {}'.format(args['filepath']))
            return
        retval, jpg_bytes = cv2.imencode('.jpg', im)

        t = datetime.now()
        mqtt_payload = payload.serialize_jpg(jpg_bytes)
        logger.debug('payload: {} ms'.format(duration(t)))
        logger.debug('payload size: {}'.format(len(mqtt_payload)))

        # Client publishes payload
        t = datetime.now()
        comm.send('berrynet/data/rgbimage', mqtt_payload)
        logger.debug('mqtt.publish: {} ms'.format(duration(t)))
        logger.debug('publish at {}'.format(datetime.now().isoformat()))
    else:
        logger.error('User assigned unknown mode {}'.format(args['mode']))


if __name__ == '__main__':
    main()

BerryNet-upstream-3.9.0/berrynet/client/dashboard.py

# Copyright 2018 DT42

#
# This file is part of BerryNet.
#
# BerryNet is free software: you can redistribute it and/or modify
# it under the terms of the GNU General Public License as published by
# the Free Software Foundation, either version 3 of the License, or
# (at your option) any later version.
#
# BerryNet is distributed in the hope that it will be useful,
# but WITHOUT ANY WARRANTY; without even the implied warranty of
# MERCHANTABILITY or FITNESS FOR A PARTICULAR PURPOSE. See the
# GNU General Public License for more details.
#