comment=fb3491d1eadfca44e7dac057533648cd4f1117b1

cotengrust-0.1.3/.github/workflows/CI.yml

# This file is autogenerated by maturin v1.5.1
# To update, run
#
# maturin generate-ci github
#
name: CI

on:
  push:
    branches:
      - main
      - master
    tags:
      - '*'
  pull_request:
  workflow_dispatch:

permissions:
  contents: read

jobs:
  linux:
    runs-on: ${{ matrix.platform.runner }}
    strategy:
      matrix:
        platform:
          - runner: ubuntu-latest
            target: x86_64
          - runner: ubuntu-latest
            target: x86
          - runner: ubuntu-latest
            target: aarch64
          - runner: ubuntu-latest
            target: armv7
          - runner: ubuntu-latest
            target: s390x
          - runner: ubuntu-latest
            target: ppc64le
    steps:
      - uses: actions/checkout@v4
      - uses: actions/setup-python@v5
        with:
          python-version: '3.10'
      - name: Build wheels
        uses: PyO3/maturin-action@v1
        with:
          target: ${{ matrix.platform.target }}
          args: --release --out dist --find-interpreter
          sccache: 'true'
          manylinux: auto
      - name: Upload wheels
        uses: actions/upload-artifact@v4
        with:
          name: wheels-linux-${{ matrix.platform.target }}
          path: dist

  windows:
    runs-on: ${{ matrix.platform.runner }}
    strategy:
      matrix:
        platform:
          - runner: windows-latest
            target: x64
          - runner: windows-latest
            target: x86
    steps:
      - uses: actions/checkout@v4
      - uses: actions/setup-python@v5
        with:
          python-version: '3.10'
          architecture: ${{ matrix.platform.target }}
      - name: Build wheels
        uses: PyO3/maturin-action@v1
        with:
          target: ${{ matrix.platform.target }}
          args: --release --out dist --find-interpreter
          sccache: 'true'
      - name: Upload wheels
        uses: actions/upload-artifact@v4
        with:
          name: wheels-windows-${{ matrix.platform.target }}
          path: dist

  macos:
    runs-on: ${{ matrix.platform.runner }}
    strategy:
      matrix:
        platform:
          - runner: macos-latest
            target: x86_64
          - runner: macos-14
            target: aarch64
    steps:
      - uses: actions/checkout@v4
      - uses: actions/setup-python@v5
        with:
          python-version: '3.10'
      - name: Build wheels
        uses: PyO3/maturin-action@v1
        with:
          target: ${{ matrix.platform.target }}
          args: --release --out dist --find-interpreter
          sccache: 'true'
      - name: Upload wheels
        uses: actions/upload-artifact@v4
        with:
          name: wheels-macos-${{ matrix.platform.target }}
          path: dist

  sdist:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - name: Build sdist
        uses: PyO3/maturin-action@v1
        with:
          command: sdist
          args: --out dist
      - name: Upload sdist
        uses: actions/upload-artifact@v4
        with:
          name: wheels-sdist
          path: dist

  release:
    name: Release
    runs-on: ubuntu-latest
    if: startsWith(github.ref, 'refs/tags/')
    needs: [linux, windows, macos, sdist]
    steps:
      - uses: actions/download-artifact@v4
      - name: Publish to PyPI
        uses: PyO3/maturin-action@v1
        env:
          MATURIN_PYPI_TOKEN: ${{ secrets.PYPI_API_TOKEN }}
        with:
          command: upload
          args: --non-interactive --skip-existing wheels-*/*

cotengrust-0.1.3/.gitignore

/target

# Byte-compiled / optimized / DLL files

__pycache__/

.pytest_cache/

*.py[cod]

# C extensions

*.so

# Distribution / packaging

.Python

.venv/

env/

bin/

build/

develop-eggs/

dist/

eggs/

lib/

lib64/

parts/

sdist/

var/

include/

man/

venv/

*.egg-info/

.installed.cfg

*.egg

# Installer logs

pip-log.txt

pip-delete-this-directory.txt

pip-selfcheck.json

# Unit test / coverage reports

htmlcov/

.tox/

.coverage

.cache

nosetests.xml

coverage.xml

# Translations

*.mo

# Mr Developer

.mr.developer.cfg

.project

.pydevproject

# Rope

.ropeproject

# Django stuff:

*.log

*.pot

.DS_Store

# Sphinx documentation

docs/_build/

# PyCharm

.idea/

# VSCode

.vscode/

# Pyenv

.python-version

cotengrust-0.1.3/Cargo.lock

# This file is automatically @generated by Cargo.

# It is not intended for manual editing.

version = 3

[[package]]

name = "autocfg"

version = "1.1.0"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "d468802bab17cbc0cc575e9b053f41e72aa36bfa6b7f55e3529ffa43161b97fa"

[[package]]

name = "bit-set"

version = "0.5.3"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "0700ddab506f33b20a03b13996eccd309a48e5ff77d0d95926aa0210fb4e95f1"

dependencies = [

"bit-vec",

]

[[package]]

name = "bit-vec"

version = "0.6.3"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "349f9b6a179ed607305526ca489b34ad0a41aed5f7980fa90eb03160b69598fb"

[[package]]

name = "bitflags"

version = "1.3.2"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "bef38d45163c2f1dde094a7dfd33ccf595c92905c8f8f4fdc18d06fb1037718a"

[[package]]

name = "cfg-if"

version = "1.0.0"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "baf1de4339761588bc0619e3cbc0120ee582ebb74b53b4efbf79117bd2da40fd"

[[package]]

name = "cotengrust"

version = "0.1.3"

dependencies = [

"bit-set",

"ordered-float",

"pyo3",

"rand",

"rustc-hash",

]

[[package]]

name = "getrandom"

version = "0.2.10"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "be4136b2a15dd319360be1c07d9933517ccf0be8f16bf62a3bee4f0d618df427"

dependencies = [

"cfg-if",

"libc",

"wasi",

]

[[package]]

name = "heck"

version = "0.4.1"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "95505c38b4572b2d910cecb0281560f54b440a19336cbbcb27bf6ce6adc6f5a8"

[[package]]

name = "indoc"

version = "2.0.4"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "1e186cfbae8084e513daff4240b4797e342f988cecda4fb6c939150f96315fd8"

[[package]]

name = "libc"

version = "0.2.147"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "b4668fb0ea861c1df094127ac5f1da3409a82116a4ba74fca2e58ef927159bb3"

[[package]]

name = "lock_api"

version = "0.4.10"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "c1cc9717a20b1bb222f333e6a92fd32f7d8a18ddc5a3191a11af45dcbf4dcd16"

dependencies = [

"autocfg",

"scopeguard",

]

[[package]]

name = "memoffset"

version = "0.9.0"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "5a634b1c61a95585bd15607c6ab0c4e5b226e695ff2800ba0cdccddf208c406c"

dependencies = [

"autocfg",

]

[[package]]

name = "num-traits"

version = "0.2.16"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "f30b0abd723be7e2ffca1272140fac1a2f084c77ec3e123c192b66af1ee9e6c2"

dependencies = [

"autocfg",

]

[[package]]

name = "once_cell"

version = "1.18.0"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "dd8b5dd2ae5ed71462c540258bedcb51965123ad7e7ccf4b9a8cafaa4a63576d"

[[package]]

name = "ordered-float"

version = "4.2.0"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "a76df7075c7d4d01fdcb46c912dd17fba5b60c78ea480b475f2b6ab6f666584e"

dependencies = [

"num-traits",

]

[[package]]

name = "parking_lot"

version = "0.12.1"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "3742b2c103b9f06bc9fff0a37ff4912935851bee6d36f3c02bcc755bcfec228f"

dependencies = [

"lock_api",

"parking_lot_core",

]

[[package]]

name = "parking_lot_core"

version = "0.9.8"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "93f00c865fe7cabf650081affecd3871070f26767e7b2070a3ffae14c654b447"

dependencies = [

"cfg-if",

"libc",

"redox_syscall",

"smallvec",

"windows-targets",

]

[[package]]

name = "portable-atomic"

version = "1.6.0"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "7170ef9988bc169ba16dd36a7fa041e5c4cbeb6a35b76d4c03daded371eae7c0"

[[package]]

name = "ppv-lite86"

version = "0.2.17"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "5b40af805b3121feab8a3c29f04d8ad262fa8e0561883e7653e024ae4479e6de"

[[package]]

name = "proc-macro2"

version = "1.0.66"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "18fb31db3f9bddb2ea821cde30a9f70117e3f119938b5ee630b7403aa6e2ead9"

dependencies = [

"unicode-ident",

]

[[package]]

name = "pyo3"

version = "0.21.1"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "a7a8b1990bd018761768d5e608a13df8bd1ac5f678456e0f301bb93e5f3ea16b"

dependencies = [

"cfg-if",

"indoc",

"libc",

"memoffset",

"parking_lot",

"portable-atomic",

"pyo3-build-config",

"pyo3-ffi",

"pyo3-macros",

"unindent",

]

[[package]]

name = "pyo3-build-config"

version = "0.21.1"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "650dca34d463b6cdbdb02b1d71bfd6eb6b6816afc708faebb3bac1380ff4aef7"

dependencies = [

"once_cell",

"target-lexicon",

]

[[package]]

name = "pyo3-ffi"

version = "0.21.1"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "09a7da8fc04a8a2084909b59f29e1b8474decac98b951d77b80b26dc45f046ad"

dependencies = [

"libc",

"pyo3-build-config",

]

[[package]]

name = "pyo3-macros"

version = "0.21.1"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "4b8a199fce11ebb28e3569387228836ea98110e43a804a530a9fd83ade36d513"

dependencies = [

"proc-macro2",

"pyo3-macros-backend",

"quote",

"syn",

]

[[package]]

name = "pyo3-macros-backend"

version = "0.21.1"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "93fbbfd7eb553d10036513cb122b888dcd362a945a00b06c165f2ab480d4cc3b"

dependencies = [

"heck",

"proc-macro2",

"pyo3-build-config",

"quote",

"syn",

]

[[package]]

name = "quote"

version = "1.0.33"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "5267fca4496028628a95160fc423a33e8b2e6af8a5302579e322e4b520293cae"

dependencies = [

"proc-macro2",

]

[[package]]

name = "rand"

version = "0.8.5"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "34af8d1a0e25924bc5b7c43c079c942339d8f0a8b57c39049bef581b46327404"

dependencies = [

"libc",

"rand_chacha",

"rand_core",

]

[[package]]

name = "rand_chacha"

version = "0.3.1"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "e6c10a63a0fa32252be49d21e7709d4d4baf8d231c2dbce1eaa8141b9b127d88"

dependencies = [

"ppv-lite86",

"rand_core",

]

[[package]]

name = "rand_core"

version = "0.6.4"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "ec0be4795e2f6a28069bec0b5ff3e2ac9bafc99e6a9a7dc3547996c5c816922c"

dependencies = [

"getrandom",

]

[[package]]

name = "redox_syscall"

version = "0.3.5"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "567664f262709473930a4bf9e51bf2ebf3348f2e748ccc50dea20646858f8f29"

dependencies = [

"bitflags",

]

[[package]]

name = "rustc-hash"

version = "1.1.0"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "08d43f7aa6b08d49f382cde6a7982047c3426db949b1424bc4b7ec9ae12c6ce2"

[[package]]

name = "scopeguard"

version = "1.2.0"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "94143f37725109f92c262ed2cf5e59bce7498c01bcc1502d7b9afe439a4e9f49"

[[package]]

name = "smallvec"

version = "1.11.0"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "62bb4feee49fdd9f707ef802e22365a35de4b7b299de4763d44bfea899442ff9"

[[package]]

name = "syn"

version = "2.0.32"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "239814284fd6f1a4ffe4ca893952cdd93c224b6a1571c9a9eadd670295c0c9e2"

dependencies = [

"proc-macro2",

"quote",

"unicode-ident",

]

[[package]]

name = "target-lexicon"

version = "0.12.11"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "9d0e916b1148c8e263850e1ebcbd046f333e0683c724876bb0da63ea4373dc8a"

[[package]]

name = "unicode-ident"

version = "1.0.11"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "301abaae475aa91687eb82514b328ab47a211a533026cb25fc3e519b86adfc3c"

[[package]]

name = "unindent"

version = "0.2.3"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "c7de7d73e1754487cb58364ee906a499937a0dfabd86bcb980fa99ec8c8fa2ce"

[[package]]

name = "wasi"

version = "0.11.0+wasi-snapshot-preview1"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "9c8d87e72b64a3b4db28d11ce29237c246188f4f51057d65a7eab63b7987e423"

[[package]]

name = "windows-targets"

version = "0.48.5"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "9a2fa6e2155d7247be68c096456083145c183cbbbc2764150dda45a87197940c"

dependencies = [

"windows_aarch64_gnullvm",

"windows_aarch64_msvc",

"windows_i686_gnu",

"windows_i686_msvc",

"windows_x86_64_gnu",

"windows_x86_64_gnullvm",

"windows_x86_64_msvc",

]

[[package]]

name = "windows_aarch64_gnullvm"

version = "0.48.5"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "2b38e32f0abccf9987a4e3079dfb67dcd799fb61361e53e2882c3cbaf0d905d8"

[[package]]

name = "windows_aarch64_msvc"

version = "0.48.5"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "dc35310971f3b2dbbf3f0690a219f40e2d9afcf64f9ab7cc1be722937c26b4bc"

[[package]]

name = "windows_i686_gnu"

version = "0.48.5"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "a75915e7def60c94dcef72200b9a8e58e5091744960da64ec734a6c6e9b3743e"

[[package]]

name = "windows_i686_msvc"

version = "0.48.5"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "8f55c233f70c4b27f66c523580f78f1004e8b5a8b659e05a4eb49d4166cca406"

[[package]]

name = "windows_x86_64_gnu"

version = "0.48.5"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "53d40abd2583d23e4718fddf1ebec84dbff8381c07cae67ff7768bbf19c6718e"

[[package]]

name = "windows_x86_64_gnullvm"

version = "0.48.5"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "0b7b52767868a23d5bab768e390dc5f5c55825b6d30b86c844ff2dc7414044cc"

[[package]]

name = "windows_x86_64_msvc"

version = "0.48.5"

source = "registry+https://github.com/rust-lang/crates.io-index"

checksum = "ed94fce61571a4006852b7389a063ab983c02eb1bb37b47f8272ce92d06d9538"

cotengrust-0.1.3/Cargo.toml

[package]
name = "cotengrust"
version = "0.1.3"
edition = "2021"

# See more keys and their definitions at https://doc.rust-lang.org/cargo/reference/manifest.html

[lib]
name = "cotengrust"
crate-type = ["cdylib"]

[dependencies]
bit-set = "0.5"
ordered-float = "4.2"
pyo3 = "0.21"
rand = "0.8"
rustc-hash = "1.1"

[profile.release]
codegen-units = 1
lto = true
opt-level = 3

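Cargo.toml lists bare requirements such as `bit-set = "0.5"` and `pyo3 = "0.21"`. Cargo reads these as caret requirements, which is why Cargo.lock can pin them to 0.5.3 and 0.21.1. The following is a minimal Rust sketch of that rule, assuming plain numeric `MAJOR.MINOR.PATCH` versions with no pre-release or build metadata (so a locked version like `0.11.0+wasi-snapshot-preview1` is out of scope). `Version`, `components`, `parse_version`, `caret_bounds` and `caret_matches` are hypothetical helpers for illustration, not part of Cargo or the `semver` crate.

```rust
/// A plain `MAJOR.MINOR.PATCH` version; the derived ordering compares
/// major, then minor, then patch.
#[derive(Debug, Clone, Copy, PartialEq, Eq, PartialOrd, Ord)]
struct Version(u64, u64, u64);

/// Splits "a.b.c" (or a prefix like "a.b") into numeric components.
/// Returns None if any component is not a non-negative integer.
fn components(s: &str) -> Option<Vec<u64>> {
    s.split('.')
        .map(|p| p.parse::<u64>().ok())
        .collect::<Option<Vec<u64>>>()
}

/// Parses a full version such as "0.5.3"; anything else yields None.
fn parse_version(s: &str) -> Option<Version> {
    match components(s)?.as_slice() {
        [a, b, c] => Some(Version(*a, *b, *c)),
        _ => None,
    }
}

/// Returns the half-open range [lower, upper) accepted by a caret
/// requirement such as "0.5" (Cargo's default for `dep = "0.5"`).
fn caret_bounds(req: &str) -> Option<(Version, Version)> {
    let parts = components(req)?;
    if parts.len() > 3 {
        return None;
    }
    // Missing components default to 0: "0.5" -> 0.5.0.
    let mut lower = [0u64; 3];
    lower[..parts.len()].copy_from_slice(&parts);
    // The leftmost non-zero component may not change; if every given
    // component is zero, the last given one is held fixed instead.
    let fixed = parts
        .iter()
        .position(|&x| x != 0)
        .unwrap_or(parts.len() - 1);
    // Upper bound: keep everything before `fixed`, bump `fixed`, zero the rest.
    let mut upper = [0u64; 3];
    upper[..fixed].copy_from_slice(&lower[..fixed]);
    upper[fixed] = lower[fixed] + 1;
    Some((
        Version(lower[0], lower[1], lower[2]),
        Version(upper[0], upper[1], upper[2]),
    ))
}

/// True if `version` satisfies the caret requirement `req`.
fn caret_matches(req: &str, version: &str) -> bool {
    match (caret_bounds(req), parse_version(version)) {
        (Some((lo, hi)), Some(v)) => lo <= v && v < hi,
        _ => false,
    }
}
```

For example, `caret_matches("1.1", "1.9.0")` is true but `caret_matches("0.5", "0.6.0")` is false: for a `0.x` requirement the minor version is the leftmost non-zero component, so it must not change.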
cotengrust-0.1.3/LICENSE

GNU AFFERO GENERAL PUBLIC LICENSE

Version 3, 19 November 2007

Copyright (C) 2007 Free Software Foundation, Inc.

Everyone is permitted to copy and distribute verbatim copies

of this license document, but changing it is not allowed.

Preamble

The GNU Affero General Public License is a free, copyleft license for

software and other kinds of works, specifically designed to ensure

cooperation with the community in the case of network server software.

The licenses for most software and other practical works are designed

to take away your freedom to share and change the works. By contrast,

our General Public Licenses are intended to guarantee your freedom to

share and change all versions of a program--to make sure it remains free

software for all its users.

When we speak of free software, we are referring to freedom, not

price. Our General Public Licenses are designed to make sure that you

have the freedom to distribute copies of free software (and charge for

them if you wish), that you receive source code or can get it if you

want it, that you can change the software or use pieces of it in new

free programs, and that you know you can do these things.

Developers that use our General Public Licenses protect your rights

with two steps: (1) assert copyright on the software, and (2) offer

you this License which gives you legal permission to copy, distribute

and/or modify the software.

A secondary benefit of defending all users' freedom is that

improvements made in alternate versions of the program, if they

receive widespread use, become available for other developers to

incorporate. Many developers of free software are heartened and

encouraged by the resulting cooperation. However, in the case of

software used on network servers, this result may fail to come about.

The GNU General Public License permits making a modified version and

letting the public access it on a server without ever releasing its

source code to the public.

The GNU Affero General Public License is designed specifically to

ensure that, in such cases, the modified source code becomes available

to the community. It requires the operator of a network server to

provide the source code of the modified version running there to the

users of that server. Therefore, public use of a modified version, on

a publicly accessible server, gives the public access to the source

code of the modified version.

An older license, called the Affero General Public License and

published by Affero, was designed to accomplish similar goals. This is

a different license, not a version of the Affero GPL, but Affero has

released a new version of the Affero GPL which permits relicensing under

this license.

The precise terms and conditions for copying, distribution and

modification follow.

TERMS AND CONDITIONS

0. Definitions.

"This License" refers to version 3 of the GNU Affero General Public License.

"Copyright" also means copyright-like laws that apply to other kinds of

works, such as semiconductor masks.

"The Program" refers to any copyrightable work licensed under this

License. Each licensee is addressed as "you". "Licensees" and

"recipients" may be individuals or organizations.

To "modify" a work means to copy from or adapt all or part of the work

in a fashion requiring copyright permission, other than the making of an

exact copy. The resulting work is called a "modified version" of the

earlier work or a work "based on" the earlier work.

A "covered work" means either the unmodified Program or a work based

on the Program.

To "propagate" a work means to do anything with it that, without

permission, would make you directly or secondarily liable for

infringement under applicable copyright law, except executing it on a

computer or modifying a private copy. Propagation includes copying,

distribution (with or without modification), making available to the

public, and in some countries other activities as well.

To "convey" a work means any kind of propagation that enables other

parties to make or receive copies. Mere interaction with a user through

a computer network, with no transfer of a copy, is not conveying.

An interactive user interface displays "Appropriate Legal Notices"

to the extent that it includes a convenient and prominently visible

feature that (1) displays an appropriate copyright notice, and (2)

tells the user that there is no warranty for the work (except to the

extent that warranties are provided), that licensees may convey the

work under this License, and how to view a copy of this License. If

the interface presents a list of user commands or options, such as a

menu, a prominent item in the list meets this criterion.

1. Source Code.

The "source code" for a work means the preferred form of the work

for making modifications to it. "Object code" means any non-source

form of a work.

A "Standard Interface" means an interface that either is an official

standard defined by a recognized standards body, or, in the case of

interfaces specified for a particular programming language, one that

is widely used among developers working in that language.

The "System Libraries" of an executable work include anything, other

than the work as a whole, that (a) is included in the normal form of

packaging a Major Component, but which is not part of that Major

Component, and (b) serves only to enable use of the work with that

Major Component, or to implement a Standard Interface for which an

implementation is available to the public in source code form. A

"Major Component", in this context, means a major essential component

(kernel, window system, and so on) of the specific operating system

(if any) on which the executable work runs, or a compiler used to

produce the work, or an object code interpreter used to run it.

The "Corresponding Source" for a work in object code form means all

the source code needed to generate, install, and (for an executable

work) run the object code and to modify the work, including scripts to

control those activities. However, it does not include the work's

System Libraries, or general-purpose tools or generally available free

programs which are used unmodified in performing those activities but

which are not part of the work. For example, Corresponding Source

includes interface definition files associated with source files for

the work, and the source code for shared libraries and dynamically

linked subprograms that the work is specifically designed to require,

such as by intimate data communication or control flow between those

subprograms and other parts of the work.

The Corresponding Source need not include anything that users

can regenerate automatically from other parts of the Corresponding

Source.

The Corresponding Source for a work in source code form is that

same work.

2. Basic Permissions.

All rights granted under this License are granted for the term of

copyright on the Program, and are irrevocable provided the stated

conditions are met. This License explicitly affirms your unlimited

permission to run the unmodified Program. The output from running a

covered work is covered by this License only if the output, given its

content, constitutes a covered work. This License acknowledges your

rights of fair use or other equivalent, as provided by copyright law.

You may make, run and propagate covered works that you do not

convey, without conditions so long as your license otherwise remains

in force. You may convey covered works to others for the sole purpose

of having them make modifications exclusively for you, or provide you

with facilities for running those works, provided that you comply with

the terms of this License in conveying all material for which you do

not control copyright. Those thus making or running the covered works

for you must do so exclusively on your behalf, under your direction

and control, on terms that prohibit them from making any copies of

your copyrighted material outside their relationship with you.

Conveying under any other circumstances is permitted solely under

the conditions stated below. Sublicensing is not allowed; section 10

makes it unnecessary.

3. Protecting Users' Legal Rights From Anti-Circumvention Law.

No covered work shall be deemed part of an effective technological

measure under any applicable law fulfilling obligations under article

11 of the WIPO copyright treaty adopted on 20 December 1996, or

similar laws prohibiting or restricting circumvention of such

measures.

When you convey a covered work, you waive any legal power to forbid

circumvention of technological measures to the extent such circumvention

is effected by exercising rights under this License with respect to

the covered work, and you disclaim any intention to limit operation or

modification of the work as a means of enforcing, against the work's

users, your or third parties' legal rights to forbid circumvention of

technological measures.

4. Conveying Verbatim Copies.

You may convey verbatim copies of the Program's source code as you

receive it, in any medium, provided that you conspicuously and

appropriately publish on each copy an appropriate copyright notice;

keep intact all notices stating that this License and any

non-permissive terms added in accord with section 7 apply to the code;

keep intact all notices of the absence of any warranty; and give all

recipients a copy of this License along with the Program.

You may charge any price or no price for each copy that you convey,

and you may offer support or warranty protection for a fee.

5. Conveying Modified Source Versions.

You may convey a work based on the Program, or the modifications to

produce it from the Program, in the form of source code under the

terms of section 4, provided that you also meet all of these conditions:

a) The work must carry prominent notices stating that you modified

it, and giving a relevant date.

b) The work must carry prominent notices stating that it is

released under this License and any conditions added under section

7. This requirement modifies the requirement in section 4 to

"keep intact all notices".

c) You must license the entire work, as a whole, under this

License to anyone who comes into possession of a copy. This

License will therefore apply, along with any applicable section 7

additional terms, to the whole of the work, and all its parts,

regardless of how they are packaged. This License gives no

permission to license the work in any other way, but it does not

invalidate such permission if you have separately received it.

d) If the work has interactive user interfaces, each must display

Appropriate Legal Notices; however, if the Program has interactive

interfaces that do not display Appropriate Legal Notices, your

work need not make them do so.

A compilation of a covered work with other separate and independent

works, which are not by their nature extensions of the covered work,

and which are not combined with it such as to form a larger program,

in or on a volume of a storage or distribution medium, is called an

"aggregate" if the compilation and its resulting copyright are not

used to limit the access or legal rights of the compilation's users

beyond what the individual works permit. Inclusion of a covered work

in an aggregate does not cause this License to apply to the other

parts of the aggregate.

6. Conveying Non-Source Forms.

You may convey a covered work in object code form under the terms

of sections 4 and 5, provided that you also convey the

machine-readable Corresponding Source under the terms of this License,

in one of these ways:

a) Convey the object code in, or embodied in, a physical product

(including a physical distribution medium), accompanied by the

Corresponding Source fixed on a durable physical medium

customarily used for software interchange.

b) Convey the object code in, or embodied in, a physical product

(including a physical distribution medium), accompanied by a

written offer, valid for at least three years and valid for as

long as you offer spare parts or customer support for that product

model, to give anyone who possesses the object code either (1) a

copy of the Corresponding Source for all the software in the

product that is covered by this License, on a durable physical

medium customarily used for software interchange, for a price no

more than your reasonable cost of physically performing this

conveying of source, or (2) access to copy the

Corresponding Source from a network server at no charge.

c) Convey individual copies of the object code with a copy of the

written offer to provide the Corresponding Source. This

alternative is allowed only occasionally and noncommercially, and

only if you received the object code with such an offer, in accord

with subsection 6b.

d) Convey the object code by offering access from a designated

place (gratis or for a charge), and offer equivalent access to the

Corresponding Source in the same way through the same place at no

further charge. You need not require recipients to copy the

Corresponding Source along with the object code. If the place to

copy the object code is a network server, the Corresponding Source

may be on a different server (operated by you or a third party)

that supports equivalent copying facilities, provided you maintain

clear directions next to the object code saying where to find the

Corresponding Source. Regardless of what server hosts the

Corresponding Source, you remain obligated to ensure that it is

available for as long as needed to satisfy these requirements.

e) Convey the object code using peer-to-peer transmission, provided

you inform other peers where the object code and Corresponding

Source of the work are being offered to the general public at no

charge under subsection 6d.

A separable portion of the object code, whose source code is excluded

from the Corresponding Source as a System Library, need not be

included in conveying the object code work.

A "User Product" is either (1) a "consumer product", which means any

tangible personal property which is normally used for personal, family,

or household purposes, or (2) anything designed or sold for incorporation

into a dwelling. In determining whether a product is a consumer product,

doubtful cases shall be resolved in favor of coverage. For a particular

product received by a particular user, "normally used" refers to a

typical or common use of that class of product, regardless of the status

of the particular user or of the way in which the particular user

actually uses, or expects or is expected to use, the product. A product

is a consumer product regardless of whether the product has substantial

commercial, industrial or non-consumer uses, unless such uses represent

the only significant mode of use of the product.

"Installation Information" for a User Product means any methods,

procedures, authorization keys, or other information required to install

and execute modified versions of a covered work in that User Product from

a modified version of its Corresponding Source. The information must

suffice to ensure that the continued functioning of the modified object

code is in no case prevented or interfered with solely because

modification has been made.

If you convey an object code work under this section in, or with, or

specifically for use in, a User Product, and the conveying occurs as

part of a transaction in which the right of possession and use of the

User Product is transferred to the recipient in perpetuity or for a

fixed term (regardless of how the transaction is characterized), the

Corresponding Source conveyed under this section must be accompanied

by the Installation Information. But this requirement does not apply

if neither you nor any third party retains the ability to install

modified object code on the User Product (for example, the work has

been installed in ROM).

The requirement to provide Installation Information does not include a

requirement to continue to provide support service, warranty, or updates

for a work that has been modified or installed by the recipient, or for

the User Product in which it has been modified or installed. Access to a

network may be denied when the modification itself materially and

adversely affects the operation of the network or violates the rules and

protocols for communication across the network.

Corresponding Source conveyed, and Installation Information provided,

in accord with this section must be in a format that is publicly

documented (and with an implementation available to the public in

source code form), and must require no special password or key for

unpacking, reading or copying.

7. Additional Terms.

"Additional permissions" are terms that supplement the terms of this

License by making exceptions from one or more of its conditions.

Additional permissions that are applicable to the entire Program shall

be treated as though they were included in this License, to the extent

that they are valid under applicable law. If additional permissions

apply only to part of the Program, that part may be used separately

under those permissions, but the entire Program remains governed by

this License without regard to the additional permissions.

When you convey a copy of a covered work, you may at your option

remove any additional permissions from that copy, or from any part of

it. (Additional permissions may be written to require their own

removal in certain cases when you modify the work.) You may place

additional permissions on material, added by you to a covered work,

for which you have or can give appropriate copyright permission.

Notwithstanding any other provision of this License, for material you

add to a covered work, you may (if authorized by the copyright holders of

that material) supplement the terms of this License with terms:

a) Disclaiming warranty or limiting liability differently from the

terms of sections 15 and 16 of this License; or

b) Requiring preservation of specified reasonable legal notices or

author attributions in that material or in the Appropriate Legal

Notices displayed by works containing it; or

c) Prohibiting misrepresentation of the origin of that material, or

requiring that modified versions of such material be marked in

reasonable ways as different from the original version; or

d) Limiting the use for publicity purposes of names of licensors or

authors of the material; or

e) Declining to grant rights under trademark law for use of some

trade names, trademarks, or service marks; or

f) Requiring indemnification of licensors and authors of that

material by anyone who conveys the material (or modified versions of

it) with contractual assumptions of liability to the recipient, for

any liability that these contractual assumptions directly impose on

those licensors and authors.

All other non-permissive additional terms are considered "further

restrictions" within the meaning of section 10. If the Program as you

received it, or any part of it, contains a notice stating that it is

governed by this License along with a term that is a further

restriction, you may remove that term. If a license document contains

a further restriction but permits relicensing or conveying under this

License, you may add to a covered work material governed by the terms

of that license document, provided that the further restriction does

not survive such relicensing or conveying.

If you add terms to a covered work in accord with this section, you

must place, in the relevant source files, a statement of the

additional terms that apply to those files, or a notice indicating

where to find the applicable terms.

Additional terms, permissive or non-permissive, may be stated in the

form of a separately written license, or stated as exceptions;

the above requirements apply either way.

8. Termination.

You may not propagate or modify a covered work except as expressly

provided under this License. Any attempt otherwise to propagate or

modify it is void, and will automatically terminate your rights under

this License (including any patent licenses granted under the third

paragraph of section 11).

However, if you cease all violation of this License, then your

license from a particular copyright holder is reinstated (a)

provisionally, unless and until the copyright holder explicitly and

finally terminates your license, and (b) permanently, if the copyright

holder fails to notify you of the violation by some reasonable means

prior to 60 days after the cessation.

Moreover, your license from a particular copyright holder is

reinstated permanently if the copyright holder notifies you of the

violation by some reasonable means, this is the first time you have

received notice of violation of this License (for any work) from that

copyright holder, and you cure the violation prior to 30 days after

your receipt of the notice.

Termination of your rights under this section does not terminate the

licenses of parties who have received copies or rights from you under

this License. If your rights have been terminated and not permanently

reinstated, you do not qualify to receive new licenses for the same

material under section 10.

9. Acceptance Not Required for Having Copies.

You are not required to accept this License in order to receive or

run a copy of the Program. Ancillary propagation of a covered work

occurring solely as a consequence of using peer-to-peer transmission

to receive a copy likewise does not require acceptance. However,

nothing other than this License grants you permission to propagate or

modify any covered work. These actions infringe copyright if you do

not accept this License. Therefore, by modifying or propagating a

covered work, you indicate your acceptance of this License to do so.

10. Automatic Licensing of Downstream Recipients.

Each time you convey a covered work, the recipient automatically

receives a license from the original licensors, to run, modify and

propagate that work, subject to this License. You are not responsible

for enforcing compliance by third parties with this License.

An "entity transaction" is a transaction transferring control of an

organization, or substantially all assets of one, or subdividing an

organization, or merging organizations. If propagation of a covered

work results from an entity transaction, each party to that

transaction who receives a copy of the work also receives whatever

licenses to the work the party's predecessor in interest had or could

give under the previous paragraph, plus a right to possession of the

Corresponding Source of the work from the predecessor in interest, if

the predecessor has it or can get it with reasonable efforts.

You may not impose any further restrictions on the exercise of the

rights granted or affirmed under this License. For example, you may

not impose a license fee, royalty, or other charge for exercise of

rights granted under this License, and you may not initiate litigation

(including a cross-claim or counterclaim in a lawsuit) alleging that

any patent claim is infringed by making, using, selling, offering for

sale, or importing the Program or any portion of it.

11. Patents.

A "contributor" is a copyright holder who authorizes use under this

License of the Program or a work on which the Program is based. The

work thus licensed is called the contributor's "contributor version".

A contributor's "essential patent claims" are all patent claims

owned or controlled by the contributor, whether already acquired or

hereafter acquired, that would be infringed by some manner, permitted

by this License, of making, using, or selling its contributor version,

but do not include claims that would be infringed only as a

consequence of further modification of the contributor version. For

purposes of this definition, "control" includes the right to grant

patent sublicenses in a manner consistent with the requirements of

this License.

Each contributor grants you a non-exclusive, worldwide, royalty-free

patent license under the contributor's essential patent claims, to

make, use, sell, offer for sale, import and otherwise run, modify and

propagate the contents of its contributor version.

In the following three paragraphs, a "patent license" is any express

agreement or commitment, however denominated, not to enforce a patent

(such as an express permission to practice a patent or covenant not to

sue for patent infringement). To "grant" such a patent license to a

party means to make such an agreement or commitment not to enforce a

patent against the party.

If you convey a covered work, knowingly relying on a patent license,

and the Corresponding Source of the work is not available for anyone

to copy, free of charge and under the terms of this License, through a

publicly available network server or other readily accessible means,

then you must either (1) cause the Corresponding Source to be so

available, or (2) arrange to deprive yourself of the benefit of the

patent license for this particular work, or (3) arrange, in a manner

consistent with the requirements of this License, to extend the patent

license to downstream recipients. "Knowingly relying" means you have

actual knowledge that, but for the patent license, your conveying the

covered work in a country, or your recipient's use of the covered work

in a country, would infringe one or more identifiable patents in that

country that you have reason to believe are valid.

If, pursuant to or in connection with a single transaction or

arrangement, you convey, or propagate by procuring conveyance of, a

covered work, and grant a patent license to some of the parties

receiving the covered work authorizing them to use, propagate, modify

or convey a specific copy of the covered work, then the patent license

you grant is automatically extended to all recipients of the covered

work and works based on it.

A patent license is "discriminatory" if it does not include within

the scope of its coverage, prohibits the exercise of, or is

conditioned on the non-exercise of one or more of the rights that are

specifically granted under this License. You may not convey a covered

work if you are a party to an arrangement with a third party that is

in the business of distributing software, under which you make payment

to the third party based on the extent of your activity of conveying

the work, and under which the third party grants, to any of the

parties who would receive the covered work from you, a discriminatory

patent license (a) in connection with copies of the covered work

conveyed by you (or copies made from those copies), or (b) primarily

for and in connection with specific products or compilations that

contain the covered work, unless you entered into that arrangement,

or that patent license was granted, prior to 28 March 2007.

Nothing in this License shall be construed as excluding or limiting

any implied license or other defenses to infringement that may

otherwise be available to you under applicable patent law.

12. No Surrender of Others' Freedom.

If conditions are imposed on you (whether by court order, agreement or

otherwise) that contradict the conditions of this License, they do not

excuse you from the conditions of this License. If you cannot convey a

covered work so as to satisfy simultaneously your obligations under this

License and any other pertinent obligations, then as a consequence you may

not convey it at all. For example, if you agree to terms that obligate you

to collect a royalty for further conveying from those to whom you convey

the Program, the only way you could satisfy both those terms and this

License would be to refrain entirely from conveying the Program.

13. Remote Network Interaction; Use with the GNU General Public License.

Notwithstanding any other provision of this License, if you modify the

Program, your modified version must prominently offer all users

interacting with it remotely through a computer network (if your version

supports such interaction) an opportunity to receive the Corresponding

Source of your version by providing access to the Corresponding Source

from a network server at no charge, through some standard or customary

means of facilitating copying of software. This Corresponding Source

shall include the Corresponding Source for any work covered by version 3

of the GNU General Public License that is incorporated pursuant to the

following paragraph.

Notwithstanding any other provision of this License, you have

permission to link or combine any covered work with a work licensed

under version 3 of the GNU General Public License into a single

combined work, and to convey the resulting work. The terms of this

License will continue to apply to the part which is the covered work,

but the work with which it is combined will remain governed by version

3 of the GNU General Public License.

14. Revised Versions of this License.

The Free Software Foundation may publish revised and/or new versions of

the GNU Affero General Public License from time to time. Such new versions

will be similar in spirit to the present version, but may differ in detail to

address new problems or concerns.

Each version is given a distinguishing version number. If the

Program specifies that a certain numbered version of the GNU Affero General

Public License "or any later version" applies to it, you have the

option of following the terms and conditions either of that numbered

version or of any later version published by the Free Software

Foundation. If the Program does not specify a version number of the

GNU Affero General Public License, you may choose any version ever published

by the Free Software Foundation.

If the Program specifies that a proxy can decide which future

versions of the GNU Affero General Public License can be used, that proxy's

public statement of acceptance of a version permanently authorizes you

to choose that version for the Program.

Later license versions may give you additional or different

permissions. However, no additional obligations are imposed on any

author or copyright holder as a result of your choosing to follow a

later version.

15. Disclaimer of Warranty.

THERE IS NO WARRANTY FOR THE PROGRAM, TO THE EXTENT PERMITTED BY

APPLICABLE LAW. EXCEPT WHEN OTHERWISE STATED IN WRITING THE COPYRIGHT

HOLDERS AND/OR OTHER PARTIES PROVIDE THE PROGRAM "AS IS" WITHOUT WARRANTY

OF ANY KIND, EITHER EXPRESSED OR IMPLIED, INCLUDING, BUT NOT LIMITED TO,

THE IMPLIED WARRANTIES OF MERCHANTABILITY AND FITNESS FOR A PARTICULAR

PURPOSE. THE ENTIRE RISK AS TO THE QUALITY AND PERFORMANCE OF THE PROGRAM

IS WITH YOU. SHOULD THE PROGRAM PROVE DEFECTIVE, YOU ASSUME THE COST OF

ALL NECESSARY SERVICING, REPAIR OR CORRECTION.

16. Limitation of Liability.

IN NO EVENT UNLESS REQUIRED BY APPLICABLE LAW OR AGREED TO IN WRITING

WILL ANY COPYRIGHT HOLDER, OR ANY OTHER PARTY WHO MODIFIES AND/OR CONVEYS

THE PROGRAM AS PERMITTED ABOVE, BE LIABLE TO YOU FOR DAMAGES, INCLUDING ANY

GENERAL, SPECIAL, INCIDENTAL OR CONSEQUENTIAL DAMAGES ARISING OUT OF THE

USE OR INABILITY TO USE THE PROGRAM (INCLUDING BUT NOT LIMITED TO LOSS OF

DATA OR DATA BEING RENDERED INACCURATE OR LOSSES SUSTAINED BY YOU OR THIRD

PARTIES OR A FAILURE OF THE PROGRAM TO OPERATE WITH ANY OTHER PROGRAMS),

EVEN IF SUCH HOLDER OR OTHER PARTY HAS BEEN ADVISED OF THE POSSIBILITY OF

SUCH DAMAGES.

17. Interpretation of Sections 15 and 16.

If the disclaimer of warranty and limitation of liability provided

above cannot be given local legal effect according to their terms,

reviewing courts shall apply local law that most closely approximates

an absolute waiver of all civil liability in connection with the

Program, unless a warranty or assumption of liability accompanies a

copy of the Program in return for a fee.

END OF TERMS AND CONDITIONS

How to Apply These Terms to Your New Programs

If you develop a new program, and you want it to be of the greatest

possible use to the public, the best way to achieve this is to make it

free software which everyone can redistribute and change under these terms.

To do so, attach the following notices to the program. It is safest

to attach them to the start of each source file to most effectively

state the exclusion of warranty; and each file should have at least

the "copyright" line and a pointer to where the full notice is found.

Copyright (C)

This program is free software: you can redistribute it and/or modify

it under the terms of the GNU Affero General Public License as published

by the Free Software Foundation, either version 3 of the License, or

(at your option) any later version.

This program is distributed in the hope that it will be useful,

but WITHOUT ANY WARRANTY; without even the implied warranty of

MERCHANTABILITY or FITNESS FOR A PARTICULAR PURPOSE. See the

GNU Affero General Public License for more details.

You should have received a copy of the GNU Affero General Public License

along with this program. If not, see .

Also add information on how to contact you by electronic and paper mail.

If your software can interact with users remotely through a computer

network, you should also make sure that it provides a way for users to

get its source. For example, if your program is a web application, its

interface could display a "Source" link that leads users to an archive

of the code. There are many ways you could offer source, and different

solutions will be better for different programs; see section 13 for the

specific requirements.

You should also get your employer (if you work as a programmer) or school,

if any, to sign a "copyright disclaimer" for the program, if necessary.

For more information on this, and how to apply and follow the GNU AGPL, see

.

# cotengrust

`cotengrust` provides fast Rust implementations of contraction ordering
primitives for tensor networks or einsum expressions. The two main functions
are:

- `optimize_optimal(inputs, output, size_dict, **kwargs)`
- `optimize_greedy(inputs, output, size_dict, **kwargs)`

The optimal algorithm is an optimized version of the `opt_einsum` 'dp'
path, itself an implementation of https://arxiv.org/abs/1304.6112.

There is also a variant of the greedy algorithm, which runs `ntrials`
randomized greedy trials and simultaneously computes and reports the flops
cost (log10) of the best path found:

- `optimize_random_greedy_track_flops(inputs, output, size_dict, **kwargs)`
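To make the greedy scoring more concrete: the API docstrings give the local score for contracting tensors `a` and `b` into `ab` as `score = size_ab / costmod - (size_a + size_b) * costmod`, optionally perturbed as `sign(score) * log(|score|) - temperature * gumbel()`. The following is a minimal pure-Python sketch of that rule, not cotengrust's Rust code. It assumes (as in `opt_einsum`'s greedy algorithm) that the candidate with the lowest score is contracted first, and the helper names (`greedy_score`, `perturbed_score`, `pick_contraction`) are hypothetical.

```python
import math
import random


def greedy_score(size_a, size_b, size_ab, costmod=1.0):
    # documented local score: lower means the pair shrinks the network more
    return size_ab / costmod - (size_a + size_b) * costmod


def gumbel(rng):
    # standard Gumbel sample by inverse transform: -log(-log(U)), U in (0, 1)
    u = rng.random()
    while u == 0.0:
        u = rng.random()
    return -math.log(-math.log(u))


def perturbed_score(score, temperature, rng):
    # sign(score) * log(|score|) - temperature * gumbel(); a zero score is
    # mapped to 0 here to avoid log(0) (an assumption of this sketch)
    base = math.copysign(math.log(abs(score)), score) if score else 0.0
    if temperature == 0.0:
        return base
    return base - temperature * gumbel(rng)


def pick_contraction(candidates, costmod=1.0, temperature=0.0, seed=None):
    """Pick the pair to contract next (hypothetical helper).

    candidates maps a pair of tensor ids to (size_a, size_b, size_ab).
    """
    rng = random.Random(seed)
    scores = {
        pair: perturbed_score(greedy_score(*sizes, costmod=costmod), temperature, rng)
        for pair, sizes in candidates.items()
    }
    # lowest (possibly perturbed) score wins
    return min(scores, key=scores.get)


candidates = {
    (0, 1): (100, 100, 10),   # two large tensors collapse to a small one
    (1, 2): (100, 10, 1000),  # output as large as an outer product
}
print(pick_contraction(candidates))  # -> (0, 1)
```

With `temperature=0.0` the choice is deterministic; raising it lets lower-ranked pairs be picked occasionally, which is what makes repeated random-greedy trials explore different paths.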

## Installation

`cotengrust` is available for most platforms from
[PyPI](https://pypi.org/project/cotengrust/):

```bash
pip install cotengrust
```

or, if you want to develop locally (which requires [pyo3](https://github.com/PyO3/pyo3)
and [maturin](https://github.com/PyO3/maturin)):

```bash
git clone https://github.com/jcmgray/cotengrust.git
cd cotengrust
maturin develop --release
```

(The `--release` flag is very important when assessing performance!)

## Usage

If `cotengrust` is installed, then by default `cotengra` will use it for its

greedy, random-greedy, and optimal subroutines, notably subtree

reconfiguration. You can also call the routines directly:

```python

import cotengra as ctg

import cotengrust as ctgr

# specify an 8x8 square lattice contraction

inputs, output, shapes, size_dict = ctg.utils.lattice_equation([8, 8])

# find the optimal 'combo' contraction path

%%time

path = ctgr.optimize_optimal(inputs, output, size_dict, minimize='combo')

# CPU times: user 13.7 s, sys: 83.4 ms, total: 13.7 s

# Wall time: 13.7 s

# construct a contraction tree for further introspection

tree = ctg.ContractionTree.from_path(

inputs, output, size_dict, path=path

)

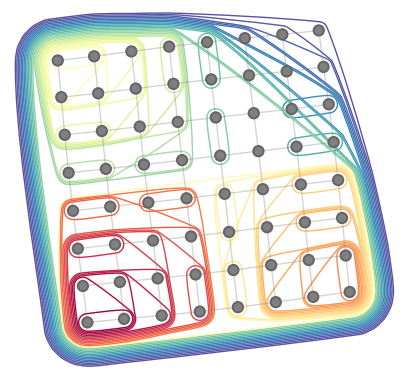

tree.plot_rubberband()

```
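The `minimize` objectives documented in the API section combine total operation count ('flops') and total memory written ('write'). As a rough illustration of what `minimize='combo'` trades off, here is a pure-Python sketch that scores a pairwise contraction path given in SSA form. It is not cotengrust's implementation, and the cost model is an assumption: each pairwise contraction is charged the product of the sizes of every index on either input, and 'write' counts only the intermediates produced. `path_costs` is a hypothetical helper.

```python
import math


def path_costs(inputs, output, size_dict, ssa_path, factor=64):
    """Score a pairwise SSA contraction path (hypothetical helper).

    Assumed cost model: each pairwise contraction costs the product of the
    sizes of all indices on either input ('flops'); 'write' sums the sizes
    of the intermediates produced; 'combo' is flops + factor * write.
    """
    terms = [frozenset(term) for term in inputs]
    keep = frozenset(output)
    alive = set(range(len(terms)))
    flops = write = 0
    for i, j in ssa_path:
        a, b = terms[i], terms[j]
        alive -= {i, j}
        # an index survives if the output or any other live tensor needs it
        others = set().union(*(terms[k] for k in alive))
        ab = (a | b) & (keep | others)
        flops += math.prod(size_dict[ix] for ix in a | b)
        write += math.prod(size_dict[ix] for ix in ab)
        # SSA: the new intermediate gets the next id
        terms.append(frozenset(ab))
        alive.add(len(terms) - 1)
    return {"flops": flops, "write": write, "combo": flops + factor * write}


# a three-matrix chain: A_ab B_bc C_cd -> X_ad
inputs = [("a", "b"), ("b", "c"), ("c", "d")]
output = ("a", "d")
size_dict = {"a": 2, "b": 3, "c": 4, "d": 5}

print(path_costs(inputs, output, size_dict, [(0, 1), (2, 3)]))
# -> {'flops': 64, 'write': 18, 'combo': 1216}
print(path_costs(inputs, output, size_dict, [(1, 2), (0, 3)]))
# -> {'flops': 90, 'write': 25, 'combo': 1690}
```

On this small chain, contracting `A` with `B` first is cheaper under every measure, so each objective would prefer the first path; on larger networks the objectives can disagree, which is where the choice of `minimize` matters.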

## API

The optimize functions follow the API of the Python implementations in `cotengra.pathfinders.path_basic`.

```python
def optimize_optimal(
    inputs,
    output,
    size_dict,
    minimize='flops',
    cost_cap=2,
    search_outer=False,
    simplify=True,
    use_ssa=False,
):
    """Find an optimal contraction ordering.

    Parameters
    ----------
    inputs : Sequence[Sequence[str]]
        The indices of each input tensor.
    output : Sequence[str]
        The indices of the output tensor.
    size_dict : dict[str, int]
        The size of each index.
    minimize : str, optional
        The cost function to minimize. The options are:

        - 'flops': minimize with respect to total operation count only
          (also known as contraction cost)
        - 'size': minimize with respect to maximum intermediate size only
          (also known as contraction width)
        - 'write': minimize the sum of all tensor sizes, i.e. memory written
        - 'combo' or 'combo={factor}': minimize the sum of
          FLOPS + factor * WRITE, with a default factor of 64.
        - 'limit' or 'limit={factor}': minimize the sum of
          MAX(FLOPS, factor * WRITE) for each individual contraction, with a
          default factor of 64.

        'combo' is generally a good default in terms of practical hardware
        performance, where both memory bandwidth and compute are limited.
    cost_cap : float, optional
        The maximum cost of a contraction to initially consider. This acts like
        a sieve and is doubled at each iteration until the optimal path can
        be found, but supplying an accurate guess can speed up the algorithm.
    search_outer : bool, optional
        If True, consider outer product contractions. This is much slower but
        theoretically might be required to find the true optimal 'flops'
        ordering. In practical settings (e.g. with minimize='combo'), outer
        products should not be required.
    simplify : bool, optional
        Whether to perform simplifications before optimizing. These are:

        - ignore any indices that appear in all terms
        - combine any repeated indices within a single term
        - reduce any non-output indices that only appear on a single term
        - combine any scalar terms
        - combine any tensors with matching indices (Hadamard products)

        Such simplifications may be required in the general case for the proper
        functioning of the core optimization, but may be skipped if the input
        indices are already in a simplified form.
    use_ssa : bool, optional
        Whether to return the contraction path in 'static single assignment'
        (SSA) format (i.e. as if each intermediate is appended to the list of
        inputs, without removals). This can be quicker and easier to work with
        than the 'linear recycled' format that `numpy` and `opt_einsum` use.

    Returns
    -------
    path : list[list[int]]
        The contraction path, given as a sequence of pairs of node indices. It
        may also have single term contractions if `simplify=True`.
    """
    ...

def optimize_greedy(
    inputs,
    output,
    size_dict,
    costmod=1.0,
    temperature=0.0,
    simplify=True,
    use_ssa=False,
):
    """Find a contraction path using a (randomizable) greedy algorithm.

    Parameters
    ----------
    inputs : Sequence[Sequence[str]]
        The indices of each input tensor.
    output : Sequence[str]
        The indices of the output tensor.
    size_dict : dict[str, int]
        A dictionary mapping indices to their dimension.
    costmod : float, optional
        When assessing local greedy scores, how much to weight the size of the
        tensors removed compared to the size of the tensor added::

            score = size_ab / costmod - (size_a + size_b) * costmod

        This can be a useful hyper-parameter to tune.
    temperature : float, optional
        When assessing local greedy scores, how much to randomly perturb the
        score. This is implemented as::

            score -> sign(score) * log(|score|) - temperature * gumbel()

        which implements Boltzmann sampling.
    simplify : bool, optional
        Whether to perform simplifications before optimizing. These are:

        - ignore any indices that appear in all terms
        - combine any repeated indices within a single term
        - reduce any non-output indices that only appear on a single term
        - combine any scalar terms
        - combine any tensors with matching indices (Hadamard products)

        Such simplifications may be required in the general case for the proper
        functioning of the core optimization, but may be skipped if the input
        indices are already in a simplified form.
    use_ssa : bool, optional
        Whether to return the contraction path in 'static single assignment'
        (SSA) format (i.e. as if each intermediate is appended to the list of
        inputs, without removals). This can be quicker and easier to work with
        than the 'linear recycled' format that `numpy` and `opt_einsum` use.

    Returns
    -------
    path : list[list[int]]
        The contraction path, given as a sequence of pairs of node indices. It
        may also have single term contractions if `simplify=True`.
    """
    ...

def optimize_simplify(
    inputs,
    output,
    size_dict,
    use_ssa=False,
):
    """Find the (partial) contraction path for simplifications only.

    Parameters
    ----------
    inputs : Sequence[Sequence[str]]
        The indices of each input tensor.
    output : Sequence[str]
        The indices of the output tensor.
    size_dict : dict[str, int]
        A dictionary mapping indices to their dimension.
    use_ssa : bool, optional
        Whether to return the contraction path in 'static single assignment'
        (SSA) format (i.e. as if each intermediate is appended to the list of
        inputs, without removals). This can be quicker and easier to work with
        than the 'linear recycled' format that `numpy` and `opt_einsum` use.

    Returns
    -------
    path : list[list[int]]
        The contraction path, given as a sequence of pairs of node indices. It
        may also have single term contractions.
    """
    ...

def optimize_random_greedy_track_flops(
    inputs,
    output,
    size_dict,
    ntrials=1,
    costmod=(0.1, 4.0),
    temperature=(0.001, 1.0),
    seed=None,
    simplify=True,
    use_ssa=False,
):
    """Perform a batch of random greedy optimizations, simultaneously tracking
    the best contraction path in terms of flops, so as to avoid constructing a
    separate contraction tree.

    Parameters
    ----------
    inputs : tuple[tuple[str]]
        The indices of each input tensor.
    output : tuple[str]
        The indices of the output tensor.
    size_dict : dict[str, int]
        A dictionary mapping indices to their dimension.
    ntrials : int, optional
        The number of random greedy trials to perform. The default is 1.
    costmod : (float, float), optional
        When assessing local greedy scores, how much to weight the size of the
        tensors removed compared to the size of the tensor added::

            score = size_ab / costmod - (size_a + size_b) * costmod

        It is sampled uniformly from the given range.
    temperature : (float, float), optional
        When assessing local greedy scores, how much to randomly perturb the
        score. This is implemented as::

            score -> sign(score) * log(|score|) - temperature * gumbel()

        which implements Boltzmann sampling. It is sampled log-uniformly from
        the given range.
    seed : int, optional
        The seed for the random number generator.
    simplify : bool, optional
        Whether to perform simplifications before optimizing. These are:

            - ignore any indices that appear in all terms
            - combine any repeated indices within a single term
            - reduce any non-output indices that only appear on a single term
            - combine any scalar terms
            - combine any tensors with matching indices (Hadamard products)

        Such simplifications may be required in the general case for the
        proper functioning of the core optimization, but may be skipped if the
        input indices are already in a simplified form.
    use_ssa : bool, optional
        Whether to return the contraction path in 'static single assignment'
        (SSA) format (i.e. as if each intermediate is appended to the list of
        inputs, without removals). This can be quicker and easier to work with
        than the 'linear recycled' format that `numpy` and `opt_einsum` use.

    Returns
    -------
    path : list[list[int]]
        The best contraction path, given as a sequence of pairs of node
        indices.
    flops : float
        The flops (i.e. contraction cost, or number of multiplications) of the
        best contraction path, given as log10.
    """
    ...
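
# Illustrative sketch (hypothetical helpers, not the Rust implementation):
# how one trial's random hyper-parameters and the perturbed local greedy
# score described in the docstring above could be computed in pure Python.
# The size_* arguments are treated here as plain numbers. That is an
# assumption: the real code may hold sizes in a different (e.g. log) form.
import math
import random


def _sketch_sample_trial_params(rng, costmod=(0.1, 4.0), temperature=(0.001, 1.0)):
    # costmod is sampled uniformly from its range
    cm = rng.uniform(*costmod)
    # temperature is sampled log-uniformly from its range
    lo, hi = math.log(temperature[0]), math.log(temperature[1])
    return cm, math.exp(rng.uniform(lo, hi))


def _sketch_greedy_score(size_a, size_b, size_ab, costmod, temperature, rng):
    score = size_ab / costmod - (size_a + size_b) * costmod
    # sign(score) * log(|score|), taking 0 for a zero score
    base = math.copysign(math.log(abs(score)), score) if score else 0.0
    # a standard Gumbel sample is -log(E) with E ~ Exponential(1)
    gumbel = -math.log(rng.expovariate(1.0))
    return base - temperature * gumbel


# e.g. with temperature=0.0 the noise vanishes, so
# _sketch_greedy_score(4, 4, 2, 1.0, 0.0, random.Random(0)) == -math.log(6)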

def ssa_to_linear(ssa_path, n=None):
    """Convert an SSA path to linear format."""
    ...
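
# Illustrative sketch (hypothetical helper, not the cotengrust implementation):
# converting an SSA path, where inputs are numbered 0..n-1 and each
# contraction result takes the next unused id, to the 'linear recycled'
# format, where positions refer to the current list of tensors, contracted
# tensors are removed and the result is appended at the end. This approach
# does not need the `n` argument of the stub above.


def _sketch_ssa_to_linear(ssa_path):
    if not ssa_path:
        return []
    # ids[s] is the current linear position of the tensor with SSA id s
    num_ids = 1 + max(max(con) for con in ssa_path)
    ids = list(range(num_ids))
    path = []
    for con in ssa_path:
        path.append(tuple(ids[s] for s in con))
        # removing tensor s shifts every tensor with a larger SSA id
        # (i.e. every later tensor in the list) one position to the left
        for s in con:
            for t in range(s, num_ids):
                ids[t] -= 1
    return path


# e.g. with 4 inputs, [(0, 2), (1, 3), (4, 5)] -> [(0, 2), (0, 1), (0, 1)]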

def find_subgraphs(inputs, output, size_dict):
    """Find all disconnected subgraphs of a specified contraction."""
    ...

```

cotengrust-0.1.3/pyproject.toml
[project]

name = "cotengrust"
version = "0.1.3"
description = "Fast contraction ordering primitives for tensor networks."
readme = "README.md"
requires-python = ">=3.8"
classifiers = [
    "Programming Language :: Rust",
    "Programming Language :: Python :: Implementation :: CPython",
    "Programming Language :: Python :: Implementation :: PyPy",
]
license = { file = "LICENSE" }
authors = [
    {name = "Johnnie Gray", email = "johnniemcgray@gmail.com"}
]

[build-system]
requires = ["maturin>=1.0,<2.0"]
build-backend = "maturin"

[tool.maturin]
features = ["pyo3/extension-module"]

cotengrust-0.1.3/src/lib.rs
use bit_set::BitSet;

use ordered_float::OrderedFloat;
use pyo3::prelude::*;
use rand::Rng;
use rand::SeedableRng;
use rustc_hash::FxHashMap;
use std::collections::{BTreeSet, BinaryHeap, HashSet};
use std::f32;
use FxHashMap as Dict;

type Ix = u16;
type Count = u8;
type Legs = Vec<(Ix, Count)>;
type Node = u16;
type Score = f32;
type SSAPath = Vec<Vec<Node>>;
type GreedyScore = OrderedFloat<Score>;

// types for optimal optimization
type ONode = u32;
type Subgraph = BitSet;
type BitPath = Vec<(Subgraph, Subgraph)>;
type SubContraction = (Legs, Score, BitPath);

/// helper struct to build contractions from the bottom up
#[derive(Clone)]
struct ContractionProcessor {
    nodes: Dict<Node, Legs>,
    edges: Dict<Ix, BTreeSet<Node>>,
    appearances: Vec<Count>,
    sizes: Vec<Score>,
    ssa: Node,
    ssa_path: SSAPath,
    track_flops: bool,
    flops: Score,
    flops_limit: Score,
}

/// given log(x) and log(y) compute log(x + y), without exponentiating both
fn logadd(lx: Score, ly: Score) -> Score {
    let max_val = lx.max(ly);
    max_val + f32::ln_1p(f32::exp(-f32::abs(lx - ly)))
}

/// given log(x) and log(y) compute log(x - y), without exponentiating both;
/// if (x - y) is negative, return -log(y - x) instead.
fn logsub(lx: f32, ly: f32) -> f32 {
    if lx < ly {
        -ly - f32::ln_1p(-f32::exp(lx - ly))
    } else {
        lx + f32::ln_1p(-f32::exp(ly - lx))
    }
}

fn compute_legs(ilegs: &Legs, jlegs: &Legs, appearances: &Vec<Count>) -> Legs {
    let mut ip = 0;
    let mut jp = 0;
    let ni = ilegs.len();
    let nj = jlegs.len();
    let mut new_legs: Legs = Vec::with_capacity(ilegs.len() + jlegs.len());

    loop {
        if ip == ni {
            new_legs.extend(jlegs[jp..].iter());
            break;
        }
        if jp == nj {
            new_legs.extend(ilegs[ip..].iter());
            break;
        }

        let (ix, ic) = ilegs[ip];
        let (jx, jc) = jlegs[jp];

        if ix < jx {
            // index only appears in ilegs
            new_legs.push((ix, ic));
            ip += 1;
        } else if ix > jx {
            // index only appears in jlegs
            new_legs.push((jx, jc));
            jp += 1;
        } else {
            // index appears in both
            let new_count = ic + jc;
            if new_count != appearances[ix as usize] {
                // not last appearance -> kept index contributes to new size
                new_legs.push((ix, new_count));
            }
            ip += 1;
            jp += 1;
        }
    }
    new_legs
}

fn compute_size(legs: &Legs, sizes: &Vec<Score>) -> Score {
    legs.iter().map(|&(ix, _)| sizes[ix as usize]).sum()
}

fn compute_flops(ilegs: &Legs, jlegs: &Legs, sizes: &Vec<Score>) -> Score {
    let mut flops: Score = 0.0;
    let mut seen: HashSet<Ix> = HashSet::with_capacity(ilegs.len());
    for &(ix, _) in ilegs {
        seen.insert(ix);
        flops += sizes[ix as usize];
    }
    for (ix, _) in jlegs {
        if !seen.contains(ix) {
            flops += sizes[*ix as usize];
        }
    }
    flops
}

fn is_simplifiable(legs: &Legs, appearances: &Vec<Count>) -> bool {
    let mut prev_ix = Ix::MAX;
    for &(ix, ix_count) in legs {
        if (ix == prev_ix) || (ix_count == appearances[ix as usize]) {
            return true;
        }
        prev_ix = ix;
    }
    false
}

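fn compute_simplified(legs: &Legs, appearances: &Vec<Count>) -> Legs {
    if legs.len() == 0 {
        return legs.clone();
    }
    let mut new_legs: Legs = Vec::with_capacity(legs.len());
    let (mut cur_ix, mut cur_cnt) = legs[0];
    for &(ix, ix_cnt) in legs.iter().skip(1) {
        if ix == cur_ix {
            // repeated index within the same term: merge the counts
            cur_cnt += ix_cnt;
        } else {
            if cur_cnt != appearances[cur_ix as usize] {
                new_legs.push((cur_ix, cur_cnt));
            }
            cur_ix = ix;
            cur_cnt = ix_cnt;
        }
    }
    // flush the final run of indices, which the loop above never pushes
    if cur_cnt != appearances[cur_ix as usize] {
        new_legs.push((cur_ix, cur_cnt));
    }
    new_legs
}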

impl ContractionProcessor {
    fn new(
        inputs: Vec<Vec<char>>,
        output: Vec<char>,
        size_dict: Dict<char, f32>,
        track_flops: bool,
    ) -> ContractionProcessor {
        if size_dict.len() > Ix::MAX as usize {
            panic!("cotengrust: too many indices, maximum is {}", Ix::MAX);
        }

        let mut nodes: Dict<Node, Legs> = Dict::default();
        let mut edges: Dict<Ix, BTreeSet<Node>> = Dict::default();